AI in healthcare can improve chronic disease care, but bias remains a critical challenge. Flawed data and design choices often lead to unequal care, especially for underrepresented groups. For example, a 2019 study found racial bias in a widely used risk-prediction algorithm that underestimated Black patients’ health needs: correcting the bias would have raised the share of Black patients identified for extra care from 17.7% to 46.5%. Addressing bias is essential to ensure AI benefits all patients equally.

Here’s how to tackle bias in AI for chronic disease care:

- Diverse Data Collection: Include patients of different races, ages, and socioeconomic backgrounds. Avoid proxy variables like healthcare costs that can misrepresent needs.

- Fair Training Practices: Use fairness metrics and eliminate misleading correlations. Test models across subgroups to ensure consistent performance.

- Continuous Monitoring: Regularly review AI outcomes to catch new biases or data drift. Establish fairness checkpoints and track demographic-specific results.

- Human Oversight: Clinicians must critically evaluate AI recommendations to avoid over-reliance or dismissal of alerts.



What Is Bias in AI for Chronic Disease Care

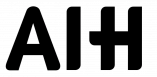

Common Types of AI Bias in Healthcare and Their Impact on Chronic Disease Care

Bias in healthcare AI occurs when predictions systematically differ across patient groups in ways that lead to unequal care. In chronic disease management, this can mean an algorithm consistently misjudges risk, underestimating it for some groups while overestimating it for others. These issues can stem from how the model is designed as well as from the data it learns from.

The root of the problem often lies in the data. If training datasets are dominated by certain demographics, such as non-Hispanic Caucasian patients, the AI learns patterns that work well for that group but fail for others. This phenomenon, known as "algorithm underestimation", means the model has too few examples to make accurate predictions for underrepresented patients. Without diverse data, the system cannot serve everyone equally.

Dr. Ted A. James from Harvard Medical School sums it up well:

"AI is more than a tool; it’s a mirror reflecting our collective values and biases."

The consequences of these biases are both measurable and concerning. A 2023 review of 48 healthcare AI models found that half carried a high risk of bias, while only 20% were considered low-risk. When such flawed models are used in chronic disease care, they can misdirect resources, delay necessary treatments, and even deepen existing healthcare disparities.

Common Types of AI Bias

AI bias can take many forms, and each can have serious repercussions in chronic disease care:

- Selection Bias: This happens when the training data doesn’t reflect the full diversity of the patient population. As a result, the AI performs poorly for underrepresented groups.

- Algorithmic Bias: This occurs when developers rely on proxy variables instead of actual health indicators. For example, they might use healthcare spending as a stand-in for illness severity. Since marginalized groups often spend less on healthcare due to access issues, the model underestimates their needs.

Dr. Kadija Ferryman from Johns Hopkins Bloomberg School of Public Health highlights the problem:

"In some groups, health care expenditure is a pretty good proxy for illness. But in other groups, it’s not."

- Confirmation Bias: Developers may unintentionally favor data that aligns with their assumptions about a disease or population. This can lead to models that reinforce stereotypes rather than accurately predicting outcomes.

Biases can also emerge during deployment:

- Automation Bias: Clinicians might overly trust AI recommendations, even when they conflict with clinical evidence.

- Dismissal Bias: High false-positive rates can lead providers to ignore AI alerts, potentially missing critical warnings about a patient’s condition.

Recognizing these types of bias is the first step in addressing their impact on chronic disease care.

How Bias Affects Chronic Disease Management

The effects of biased AI are very real in chronic disease care. In the widely used risk-stratification algorithm from the 2019 study, racial bias cut the share of Black patients identified for extra care by more than half. This misallocation of resources denies patients access to essential programs and delays life-saving interventions.

A striking example involves a kidney function equation (eGFR) that applied a race-based correction. For Black patients, it reported higher estimated kidney function than for White patients with identical creatinine levels, making their kidney disease appear less advanced. This directly delayed organ transplant referrals for Black patients. Such outcomes highlight how flawed data and design choices can have life-altering consequences.

Chronic disease management is especially vulnerable because it relies heavily on algorithms for tasks like risk assessment and resource allocation. When these models are biased, they exclude vulnerable groups from critical care. For instance, a diabetes patient might miss out on intensive monitoring, or someone with heart disease could be labeled "low-risk" despite clear warning signs. In these cases, the AI becomes a barrier to proper care.

Models trained on historical data often replicate past healthcare inequalities, perpetuating disparities in chronic disease outcomes.

| Bias Type | Manifestation in Care | Impact on Chronic Care |
|---|---|---|
| Imbalanced Sample Size | Worse performance for minorities | Underestimates disease severity in underrepresented groups |
| Missing Social Determinants | Inaccurate risk predictions for low-income patients | Overlooks barriers like housing, food security, or transportation |
| Measurement Bias | AI learns patterns from specific hardware | Models may fail when used with different devices or scanners |
| Feedback Loop Bias | AI reinforces its own errors | Clinician reliance on biased recommendations creates a cycle |

Understanding these sources of bias is critical as we move toward reducing their influence and improving outcomes in chronic disease care. Up next, we’ll dive into practical steps to address these challenges in AI models.

How to Reduce Bias in AI Models

Reducing bias in AI models takes intentional effort at every stage of their lifecycle, from development to deployment. Healthcare providers and developers have identified effective ways to create fairer AI systems, focusing on three key areas: building diverse datasets, ensuring fairness during training, and monitoring performance continuously. Let’s break down these strategies.

Collecting Better Data from Diverse Patient Groups

Creating fair AI begins with collecting data that accurately represents the population it serves. When certain groups or conditions dominate the dataset, predictions can become skewed. Stratified sampling can help by ensuring no demographic or medical condition is over- or under-represented. This means deliberately including patients from different races, age groups, income brackets, and regions.
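Both steps can be checked programmatically. The sketch below is a minimal illustration, assuming each record carries a single group label and that you have reference shares for the population you serve (the field names, group labels, and shares are hypothetical). The first function compares the dataset's mix with that reference; the second draws an equal number of records per group, which is one simple form of stratified sampling.

```python
import random
from collections import Counter


def representation_gaps(groups, reference_shares):
    """Dataset share minus reference share for each group (negative = under-represented).

    groups: one group label per training record.
    reference_shares: hypothetical target shares for the served population, summing to 1.
    """
    counts = Counter(groups)
    total = len(groups)
    return {g: counts.get(g, 0) / total - share for g, share in reference_shares.items()}


def stratified_sample(records, key, per_group, seed=0):
    """Draw up to `per_group` records from every group so no single group dominates."""
    rng = random.Random(seed)  # fixed seed for a reproducible sample
    by_group = {}
    for rec in records:
        by_group.setdefault(rec[key], []).append(rec)
    sample = []
    for group in sorted(by_group):
        members = by_group[group]
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample


# Made-up data: group "B" is 20% of the records but 40% of the served population.
groups = ["A"] * 80 + ["B"] * 20
print(representation_gaps(groups, {"A": 0.6, "B": 0.4}))
records = [{"group": g, "id": i} for i, g in enumerate(groups)]
print(Counter(r["group"] for r in stratified_sample(records, "group", per_group=15)))
```

Equal allocation is only one option; sampling in proportion to the reference shares is equally valid when the goal is to mirror the served population rather than to balance groups.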

Early collaboration with medical professionals and patient advocates is also critical. For instance, if you’re developing an AI for diabetes management, working with endocrinologists and patients from underserved communities can help identify overlooked data points or gaps.

Data preprocessing plays a huge role in reducing bias. Some features, like zip codes, can unintentionally act as stand-ins for race or socioeconomic status, introducing unfairness. Tools such as Microsoft’s Fairlearn can remove these correlations using techniques like CorrelationRemover. Other methods, like reweighting or oversampling, can balance datasets by giving underrepresented groups more significance during training.
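Reweighting can be sketched in a few lines. The version below implements the reweighing scheme of Kamiran and Calders (the approach behind the `Reweighing` preprocessor in AI Fairness 360) in plain Python rather than calling that library; the group and label values are hypothetical. Each sample gets the weight P(group) × P(label) / P(group, label), so (group, label) combinations that are rarer than independence would predict count for more.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-sample weights w(g, y) = P(g) * P(y) / P(g, y).

    For every (group, label) combination present in the data, the weighted
    counts make group membership and label statistically independent, so
    under-represented combinations carry more weight during training.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


# Hypothetical data: group "B" rarely carries the positive (high-need) label.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]
print(reweighing_weights(groups, labels))
```

Many scikit-learn estimators, such as `LogisticRegression`, accept these values through the `sample_weight` argument of `fit`.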

For missing data, avoid relying on simple averages, which can erase important subgroup distinctions. Instead, use stratified imputation to maintain patterns within specific demographics, such as age ranges or disease stages.
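A minimal sketch of stratified imputation, assuming records are dictionaries with missing values stored as `None`; the `hba1c` and `age_band` fields and all values are hypothetical. Each gap is filled with the median of the record's own stratum, falling back to the overall median only when that stratum has no observed values.

```python
from statistics import median


def stratified_median_impute(rows, value_key, strata_keys):
    """Return copies of `rows` with None values replaced by their stratum's median.

    Assumes at least one observed value overall (otherwise median() raises).
    """
    strata = {}
    for row in rows:
        if row[value_key] is not None:
            stratum = tuple(row[k] for k in strata_keys)
            strata.setdefault(stratum, []).append(row[value_key])
    overall = median(v for v in (r[value_key] for r in rows) if v is not None)

    filled = []
    for row in rows:
        new_row = dict(row)  # leave the input untouched
        if new_row[value_key] is None:
            values = strata.get(tuple(row[k] for k in strata_keys))
            new_row[value_key] = median(values) if values else overall
        filled.append(new_row)
    return filled


rows = [
    {"age_band": "18-44", "hba1c": 6.0},
    {"age_band": "18-44", "hba1c": 6.4},
    {"age_band": "18-44", "hba1c": None},
    {"age_band": "65+", "hba1c": 8.0},
    {"age_band": "65+", "hba1c": 8.4},
    {"age_band": "65+", "hba1c": None},
]
print(stratified_median_impute(rows, "hba1c", ["age_band"]))
```

With this toy data a single overall median would assign 7.2 to both missing values, while the stratified medians keep them at 6.2 and 8.2, preserving the difference between age bands.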

Fima Furman from Towards Data Science sums it up well:

"AI bias refers to discrimination when AI systems produce unequal outcomes for different groups due to bias in the training data."

Once data diversity is addressed, the focus shifts to embedding fairness into the training process.

Training and Testing Models for Fairness

Measuring and correcting bias during training is essential. Developers can use fairness metrics like Statistical Parity Difference, Average Odds Difference, and Equal Opportunity Difference. Ideally, these metrics should approach zero, indicating balanced outcomes. Tools such as Fairlearn and AI Fairness 360 are designed to assess and reduce bias at this stage.
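The three metrics named above can be computed directly from binary predictions. This minimal sketch follows their usual definitions (unprivileged group minus privileged group, matching AI Fairness 360's naming); it assumes binary labels and predictions, and that each group contains both positive and negative cases. The group labels and data are hypothetical. Fairlearn offers related functions such as `demographic_parity_difference` and `equalized_odds_difference` in `fairlearn.metrics`, though those report absolute gaps rather than signed differences.

```python
def _rates(y_true, y_pred):
    """Selection rate, true positive rate and false positive rate for one group."""
    pos_preds = [p for t, p in zip(y_true, y_pred) if t == 1]
    neg_preds = [p for t, p in zip(y_true, y_pred) if t == 0]
    selection_rate = sum(y_pred) / len(y_pred)
    tpr = sum(pos_preds) / len(pos_preds)
    fpr = sum(neg_preds) / len(neg_preds)
    return selection_rate, tpr, fpr


def fairness_metrics(y_true, y_pred, groups, unprivileged, privileged):
    """Signed differences (unprivileged minus privileged); 0 means parity."""
    def subset(group):
        idx = [i for i, g in enumerate(groups) if g == group]
        return [y_true[i] for i in idx], [y_pred[i] for i in idx]

    sel_u, tpr_u, fpr_u = _rates(*subset(unprivileged))
    sel_p, tpr_p, fpr_p = _rates(*subset(privileged))
    return {
        "statistical_parity_difference": sel_u - sel_p,
        "equal_opportunity_difference": tpr_u - tpr_p,
        "average_odds_difference": 0.5 * ((fpr_u - fpr_p) + (tpr_u - tpr_p)),
    }


# Hypothetical predictions: group "B" is flagged for extra care less often.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(fairness_metrics(y_true, y_pred, groups, unprivileged="B", privileged="A"))
```

Negative values here indicate that the unprivileged group is selected less often or has its true cases missed more often.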

Fairness can also be built into the model’s optimization process. In-processing techniques, such as fairness-aware regularization or upweighting underrepresented samples in the training loss, push the model to perform well for all groups rather than only the majority. Removing proxy features during preprocessing further reduces the misleading correlations a model can learn.

Studies have shown that shifting algorithms to rely on direct health indicators, rather than proxy variables, can significantly improve inclusion and reduce disparities in care for chronic diseases.

Validation is equally important. AI models must be tested across diverse demographic subgroups to confirm consistent performance. For example, a review of 555 neuroimaging-based AI models revealed that only 15.5% included external validation, and 83% were rated as having a high risk of bias.
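Subgroup validation can start as simply as computing sensitivity and specificity separately for each group and checking the largest gap between groups. A minimal sketch; the 0.05 tolerance is a hypothetical choice that a clinical team would need to set for its own use case, and the data are made up.

```python
from collections import Counter


def subgroup_performance(y_true, y_pred, groups):
    """Sensitivity and specificity for each subgroup (None when undefined)."""
    stats = {}
    for g in sorted(set(groups)):
        cells = Counter((t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g)
        tp, fn = cells[(1, 1)], cells[(1, 0)]
        tn, fp = cells[(0, 0)], cells[(0, 1)]
        stats[g] = {
            "n": tp + fn + tn + fp,
            "sensitivity": tp / (tp + fn) if tp + fn else None,
            "specificity": tn / (tn + fp) if tn + fp else None,
        }
    return stats


def largest_gap(stats, metric, tolerance=0.05):
    """Return (gap between best and worst subgroup, whether it exceeds the tolerance)."""
    values = [s[metric] for s in stats.values() if s[metric] is not None]
    gap = max(values) - min(values)
    return gap, gap > tolerance


y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
stats = subgroup_performance(y_true, y_pred, groups)
print(stats)
print(largest_gap(stats, "sensitivity"))
```

The same report should be produced on external data from a different site, since a model can look balanced on its development data and still diverge elsewhere.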

Transparency is another critical factor. According to the U.S. Department of Health and Human Services:

"All relevant individuals should understand how their data is being used and how AI systems make decisions; algorithms, attributes and correlations should be open to inspection."

Using model cards – detailed summaries of datasets and training processes – can help provide this clarity and build trust.
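A model card can also be kept in machine-readable form so it can be versioned alongside the model and checked automatically. The sketch below shows one possible structure; every field name, the example values, and the 10% representation threshold are hypothetical and do not follow any official schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Minimal, hypothetical model-card structure for a clinical risk model."""
    model_name: str
    intended_use: str
    training_data_sources: list[str]
    demographic_shares: dict[str, float]            # group -> share of training records
    subgroup_metrics: dict[str, dict[str, float]]   # group -> metric name -> value
    known_limitations: list[str] = field(default_factory=list)

    def underrepresented_groups(self, min_share=0.10):
        """Groups whose share of the training data falls below `min_share`."""
        return sorted(g for g, s in self.demographic_shares.items() if s < min_share)

    def to_json(self):
        return json.dumps(asdict(self), indent=2, sort_keys=True)


card = ModelCard(
    model_name="ckd-progression-demo",
    intended_use="Flag adults with chronic kidney disease for care-management outreach",
    training_data_sources=["Hypothetical EHR extract"],
    demographic_shares={"group_a": 0.72, "group_b": 0.22, "group_c": 0.06},
    subgroup_metrics={"group_a": {"sensitivity": 0.81}, "group_c": {"sensitivity": 0.64}},
    known_limitations=["Few patients from group_c; validate locally before use"],
)
print(card.underrepresented_groups())
print(card.to_json())
```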

Monitoring AI Performance Over Time

Deploying an AI model isn’t the final step; it’s just the beginning. Continuous monitoring is crucial because patient populations and clinical environments evolve. This shift, known as data drift, can introduce new biases over time.
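One common way to quantify data drift is the population stability index (PSI), which compares how a feature is distributed now against how it was distributed at validation time. PSI is offered here as an illustration rather than a method named in this article, and the thresholds often quoted for it (below 0.1 stable, above 0.25 a major shift) are industry rules of thumb, not clinical standards. A minimal sketch with equal-width bins taken from the baseline data; the age values are made up.

```python
import math


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI = sum((actual - expected) * ln(actual / expected)) over bins.

    Bins are equal-width over the baseline range; values outside that range
    are counted in the first or last bin. `eps` avoids log(0) for empty bins.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical example: the monitored population has shifted toward older patients.
baseline_ages = [20 + i * 0.6 for i in range(100)]
current_ages = [50 + i * 0.3 for i in range(100)]
print(population_stability_index(baseline_ages, baseline_ages))  # identical data gives 0
print(population_stability_index(baseline_ages, current_ages))
```

The same calculation can be run separately for each demographic subgroup, since drift concentrated in one group can be invisible in the aggregate.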

Healthcare organizations should establish checkpoints where models undergo fairness reviews before continued use. Creating an algorithm inventory allows for systematic, periodic evaluations of all active models. Regular monitoring should include comparing outcomes across demographic subgroups to ensure consistent clinical results and resource allocation. Revisiting fairness metrics from the training phase helps maintain equity.

For example, if an AI system starts showing different accuracy rates for racial groups, it should trigger an immediate investigation. As JAMA Network Open notes:

"After an algorithm is deployed, continuous monitoring for performance and data drift is necessary. Monitoring should assess the fairness and equity of the algorithm output as well as the impact of the algorithm on patients, populations, and society."

Practical steps for monitoring include maintaining audit logs, tracking how AI recommendations influence clinical decisions, and setting clear stopping rules: criteria for when an algorithm should be taken offline if it fails fairness standards.
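A stopping rule and its audit trail can be expressed as a small checkpoint function. This is a minimal sketch: the metric names, limits, and model identifier are hypothetical, and a real deployment would write to durable, access-controlled storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone


def fairness_checkpoint(model_id, metrics, limits, audit_log):
    """Apply a stopping rule: suspend the model if any |metric| exceeds its limit.

    Every check, pass or fail, is appended to `audit_log` as a JSON line.
    """
    breaches = {
        name: value
        for name, value in metrics.items()
        if name in limits and abs(value) > limits[name]
    }
    decision = "suspend" if breaches else "continue"
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "metrics": metrics,
        "breaches": breaches,
        "decision": decision,
    }, sort_keys=True))
    return decision


limits = {"statistical_parity_difference": 0.10, "equal_opportunity_difference": 0.05}
log = []
print(fairness_checkpoint("risk-model-v3", {"statistical_parity_difference": -0.04,
                                            "equal_opportunity_difference": -0.02}, limits, log))
print(fairness_checkpoint("risk-model-v3", {"statistical_parity_difference": -0.15,
                                            "equal_opportunity_difference": -0.02}, limits, log))
print(len(log))
```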

Finally, human oversight remains essential. Clinicians must review AI recommendations critically to catch errors or biases in real time, ensuring that technology enhances patient care without replacing sound clinical judgment.

Adding Bias-Reduction Tools to Clinical Workflows

Incorporating tools to address bias directly into clinical workflows is crucial for ensuring fair and equitable healthcare. This means healthcare providers must have practical methods to integrate fairness into everyday tasks, such as patient monitoring and treatment decisions. Achieving this requires both the right technology and a well-structured, multidisciplinary team.

Using AIH LLC’s aiSpine and aiRing for Better Monitoring

Smart wearable devices like AIH LLC’s aiSpine and aiRing offer promising solutions for chronic disease monitoring. These devices collect real-time health data – aiSpine tracks posture patterns, while aiRing monitors vital signs – directly from patients in their everyday environments. This approach reduces reliance on sporadic clinical visits, which may miss critical day-to-day variations.

However, the effectiveness of these tools hinges on local validation. A device designed for one clinical setting may not perform equally well in another due to differences in patient demographics, environmental conditions, or care practices. Before widespread use, these devices must be validated across diverse patient groups. For instance, aiSpine should be tested on individuals with varying body types and mobility challenges to ensure its posture monitoring remains accurate and beneficial for all users.

The AIH Health App’s historical data analysis features can further support bias reduction. By enabling clinicians to track patterns across different patient subgroups, the app helps identify disparities in monitoring quality or alert accuracy. This prevents situations where overall metrics appear favorable while certain populations receive subpar care. Used this way, these tools extend continuous monitoring by making bias detection specific to each patient group.
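The kind of subgroup breakdown described above can be illustrated with alert precision. This sketch does not use any AIH Health App interface; it assumes a hypothetical export of fired alerts as (group, confirmed-on-review) pairs, with made-up counts, and shows how a strong overall figure can hide a weak subgroup.

```python
from collections import defaultdict


def alert_precision_by_group(alerts):
    """Overall and per-group positive predictive value of fired alerts.

    alerts: iterable of (group, confirmed) pairs, where `confirmed` is True when
    clinician review judged the alert to be a real event.
    """
    fired = defaultdict(int)
    confirmed = defaultdict(int)
    for group, was_confirmed in alerts:
        fired[group] += 1
        confirmed[group] += int(was_confirmed)
    overall = sum(confirmed.values()) / sum(fired.values())
    per_group = {g: confirmed[g] / fired[g] for g in sorted(fired)}
    return overall, per_group


# Made-up data: 90 alerts for group A, 10 for group B.
alerts = [("A", True)] * 85 + [("A", False)] * 5 + [("B", True)] * 4 + [("B", False)] * 6
overall, per_group = alert_precision_by_group(alerts)
print(overall, per_group)
```

Here 89 of 100 alerts are confirmed overall, yet only 4 of the 10 alerts for group B are, a gap the aggregate figure hides.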

At the same time, providers must be mindful of deployment-specific biases. For example, automation bias can occur when clinicians overly rely on AI-generated suggestions without critical review. Similarly, alert fatigue – caused by excessive false positives – can lead to important warnings being ignored. With aiRing’s vital sign monitoring, clinical teams should establish clear protocols to determine when to trust automated alerts and when to perform manual verification, especially during the early stages of implementation.

Working with AI Experts and Healthcare Teams

Reducing bias requires early and ongoing collaboration among diverse experts. Teams should include clinicians familiar with patient needs, data scientists who can assess algorithmic fairness, bioethicists to address equity concerns, and representatives from the patient communities being served. This multidisciplinary approach helps identify potential blind spots that more homogeneous teams might overlook.

Collaboration should begin during problem formulation, well before selecting or deploying any AI tool. Healthcare teams and AI experts must align on the primary goal – whether it’s achieving equitable outcomes or optimizing for other metrics, such as cost efficiency. Studies have shown that using proxy variables like healthcare costs instead of direct health indicators can lead to significant disparities in care distribution among demographic groups.

Transparency is another critical element. Tools like model cards or dataset datasheets can document data sources, demographic representation, and known limitations. When implementing AIH LLC’s devices, clinical teams should work closely with the company to understand the populations included in the training data and assess whether local validation is necessary for their patient mix.

Governance structures also play a key role. Establishing oversight committees and checkpoint gates ensures that bias considerations are reviewed at multiple stages – before deployment, during the initial rollout, and throughout ongoing use. As the World Health Organization’s Jarbas Barbosa da Silva Jr emphasizes:

"Addressing algorithmic bias should be treated as a core health quality standard, comparable in importance to safety and efficacy evaluations, to ensure consistent performance across all segments of the population."

Finally, continuous monitoring is essential for maintaining fairness over time. Healthcare teams should set up regular review cycles where AI experts analyze performance data across demographic subgroups, keeping an eye out for signs of drift or emerging disparities. This isn’t a one-time task – it’s an ongoing collaboration to ensure AI tools for chronic disease monitoring remain effective and equitable for all patients.

Examples of Successful Bias Reduction

Real-world examples illustrate how targeted efforts to reduce bias can improve both fairness and accuracy in chronic disease monitoring.

Example: Reducing Bias in Spine Health Monitoring

In March 2026, a multicenter study led by Dr. Teng Zhang from the University of Hong Kong and Dr. Guilin Chen from Peking Union Medical College Hospital tackled measurement bias in AI-based spine alignment assessments. The team introduced a real-time, plug-and-play data transformation method to harmonize 3,899 radiographs collected between January 2012 and August 2024 from seven hospitals across Hong Kong and Mainland China. By standardizing pixel-level variations using unsupervised histogram-based profiling, they addressed inter-device inconsistencies across systems from manufacturers like EOS, Philips, and GE.

The outcome? The enhanced SpineHRNet+ model achieved mean Cobb angle prediction errors within 4° (standard deviation 3.12°) and maintained a coefficient of determination (R²) above 0.90 when tested on external datasets. It also recorded a sensitivity of 90.18% and a negative predictive value of 93.16%. As noted in the Journal of Medical Internet Research:

"The proposed data transformation approach effectively addressed data heterogeneity, significantly improving the accuracy and robustness of SpineHRNet+ in multicenter AIS assessments."

This method isn’t just accurate – it’s efficient, with a computational complexity of O(n log n), making it suitable for routine clinical workflows.
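The study's own transformation is not reproduced here. As a generic illustration from the same family of techniques, the sketch below implements standard histogram (quantile) matching, which maps each pixel intensity from one scanner onto the intensity found at the same quantile of a reference image. Sorting dominates its cost, so it also runs in O(n log n). Images are treated as flat lists of intensities, and the values are hypothetical.

```python
from bisect import bisect_right


def match_histogram(source, reference):
    """Map each source intensity to the reference intensity at the same quantile.

    Cost is dominated by the two sorts, O(n log n), plus one binary search per pixel.
    """
    src_sorted = sorted(source)
    ref_sorted = sorted(reference)
    n_src, n_ref = len(src_sorted), len(ref_sorted)
    matched = []
    for value in source:
        rank = bisect_right(src_sorted, value)  # pixels <= value, so the quantile is rank / n_src
        # Index of the same quantile in the reference: ceil(rank * n_ref / n_src) - 1,
        # computed with integers to avoid floating-point rounding at bin edges.
        idx = (rank * n_ref + n_src - 1) // n_src - 1
        matched.append(ref_sorted[idx])
    return matched


# Hypothetical flattened images: scanner X reads darker than the reference scanner.
scanner_x = [12, 40, 25, 33, 18, 40]
reference = [100, 180, 140, 160, 120, 200]
print(match_histogram(scanner_x, reference))
```

Because the mapping is monotonic, it preserves the ordering of intensities within each image while aligning their overall distribution with the reference device.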

Example: Personalized Care with aiRing

AIH LLC’s aiRing highlights how continuous monitoring, combined with bias-reduction strategies, can enhance personalized care for chronic disease management. By collecting real-time health data across diverse patient environments, aiRing reduces the dependency on sporadic clinical visits, which often fail to capture daily variations in a patient’s condition.

To achieve effective personalization, the system moves beyond convenience sampling. It deliberately includes a wide range of populations – spanning different ancestries, genders, ages, and socioeconomic statuses – and uses subgroup analysis to ensure equitable performance across these groups.

For clinical teams deploying aiRing, it’s crucial to establish protocols that validate the device’s performance within their specific patient populations. This ensures that vital sign thresholds and alert mechanisms work fairly and equitably for everyone.

Conclusion

Addressing AI bias in chronic disease care requires a commitment to vigilance throughout the entire AI lifecycle – from design and development to deployment and ongoing monitoring. By doing so, healthcare providers can uphold the principles of fairness and equity, ensuring AI tools don’t reinforce the systemic inequities already present in healthcare.

Data from the FDA highlights the persistent challenges of bias in healthcare AI, underscoring the urgent need for proactive measures.

What can be done? Start by evaluating whether your AI tools’ training data accurately represents your patient population. Insist on transparency from vendors by requesting model cards that outline potential biases and limitations. Avoid using proxy variables like healthcare costs, and instead incorporate direct health indicators. This shift has proven to improve the enrollment of high-risk patients in care management programs. Keep an eye on data drift as patient demographics and clinical practices evolve. Above all, ensure human oversight remains central. The U.S. Department of Health and Human Services emphasizes:

"All relevant individuals should understand how their data is being used and how AI systems make decisions".

By diversifying data collection, conducting rigorous testing, and implementing continuous monitoring, healthcare organizations can take meaningful steps to reduce bias in AI. For those using tools like AIH LLC’s aiSpine and aiRing, these principles translate into actionable steps: validate the technology’s performance within local populations, report outcomes for different demographic groups, and actively engage with underserved communities. Equity requires tailoring resources to meet individual needs, rather than offering identical solutions to everyone, regardless of their unique challenges.

Reducing bias isn’t just a technical hurdle – it’s a moral obligation. Treating algorithmic fairness as a core standard, on par with safety and efficacy, ensures that AI can serve all patients fairly, not just those already advantaged by the system. Achieving this goal demands collaboration and accountability from clinicians, data scientists, and healthcare leaders, ensuring equity remains a priority in chronic disease care.

FAQs

How can we spot bias in an AI model before using it in patient care?

Detecting bias in an AI model before it’s deployed in healthcare is critical. To do this, start with extensive testing. Examine how the model performs across different demographic groups or social factors to uncover any disparities. This step helps identify whether the model favors or disadvantages certain groups.

Next, take a close look at the training data. Auditing the quality and diversity of the data is essential since biased data can lead to biased predictions. Implementing fairness metrics, such as demographic parity, can also provide measurable insights into potential bias.

Additionally, tools like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) can be used to interpret the model’s predictions. These tools help explain why the AI makes certain decisions, making it easier to spot unfair patterns.

Finally, continuous monitoring of the model after deployment is key. Involving a diverse group of stakeholders – like healthcare professionals, ethicists, and patient advocates – ensures the model remains fair and safe for all patient groups over time.

What fairness metrics should we track for chronic disease AI models?

When evaluating AI models for chronic disease management, fairness is a critical consideration. Two important metrics to assess fairness are demographic parity and equalized odds, which help identify how the model performs across different patient groups. These metrics ensure that no group is disproportionately favored or disadvantaged by the model’s predictions.

In addition to these metrics, conducting fairness audits during the development process is vital. These audits help uncover and address potential biases early, ensuring the model delivers equitable outcomes for all patients, regardless of their background or demographics.

What should a clinic monitor after deploying an AI tool to detect new bias?

Clinics need to keep a close eye on how the AI tool performs across different patient groups by using fairness metrics. It’s also important to review the quality of the data being used and check for any disparities in patient outcomes. By monitoring these factors regularly, clinics can spot and address biases that might arise after the tool has been deployed.