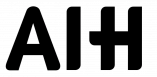

Multimodal data fusion in healthcare combines diverse data sources like imaging, wearables, genomics, and health records to improve chronic disease management. While it holds promise for personalized care and precise diagnostics, it faces major challenges:

- Data integration issues: Formats like imaging (DICOM) and wearable data differ in structure and timing, complicating alignment.

- Missing data: Gaps due to sensor errors or skipped tests reduce model accuracy (e.g., Alzheimer’s predictions drop from 90% to 72.4% with incomplete data).

- Privacy concerns: Patient re-identification risks persist despite anonymization, requiring strict compliance with regulations.

- Fusion method limitations: Early, intermediate, and late fusion techniques struggle with mismatched dimensions, high computational needs, and small sample sizes.

AI frameworks such as MedFusionAI and HEALNet apply deep learning models (e.g., CNNs, transformers) to these challenges; MedFusionAI, for example, reported 98.76% accuracy in predicting chronic disease risk. Wearables like aiSpine and aiRing add real-time data for continuous monitoring, bridging gaps in patient care.

The future of chronic disease management lies in systems that merge imaging, EHRs, and wearable data for tailored treatments, shifting care from reactive to proactive approaches.

Multimodal Data Fusion Challenges and AI Solutions in Chronic Disease Management



Main Challenges in Multimodal Data Integration

When it comes to managing chronic diseases, integrating multimodal data presents unique hurdles that demand attention.

Data Heterogeneity and Incompatibility

One of the biggest challenges in combining multimodal data is dealing with inconsistent formats. For example, medical imaging files (such as DICOM or NIfTI), genomic arrays, and streams from wearable devices (such as aiSpine or aiRing) all use different structures and scales and carry different levels of noise. This makes seamless integration a tough task.

Another issue is the imbalance between data types. High-dimensional genomic data often overshadows simpler clinical measurements, skewing AI models toward the more complex data. A review of 69 studies on multimodal data integration revealed that while combinations like imaging with clinical data (22 studies) and imaging with genomics (19 studies) are common, aligning these formats remains a persistent struggle.

Timing is another factor. Wearable devices like aiRing collect real-time, high-frequency data – recording heart rate every second – while imaging methods, such as CT scans, happen sporadically. This mismatch in temporal patterns makes it tricky to align datasets without sacrificing important time-based insights.
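One common way to reconcile the two rhythms is to summarize the high-frequency stream in a window around each sporadic event. The sketch below is a minimal illustration using only the Python standard library; the function name `summarize_window`, the 3-second window, and the sample values are assumptions made for the example, not part of any system described here.

```python
from bisect import bisect_left, bisect_right
from statistics import mean

def summarize_window(timestamps, values, event_time, window_s):
    """Summarize wearable samples recorded in the window_s seconds up to event_time.

    timestamps must be sorted ascending (in seconds); values is the parallel list.
    Returns None when no sample falls in the window, so the gap stays explicit.
    """
    lo = bisect_left(timestamps, event_time - window_s)
    hi = bisect_right(timestamps, event_time)
    window = values[lo:hi]
    if not window:
        return None
    return {"n": len(window), "mean": mean(window), "min": min(window), "max": max(window)}

# Hypothetical data: heart rate sampled once per second for ten seconds.
hr_times = list(range(10))
hr_values = [60, 62, 61, 63, 65, 64, 66, 70, 68, 67]
scan_time = 7  # a scan recorded at t = 7 s

features = summarize_window(hr_times, hr_values, scan_time, window_s=3)
print(features)  # summary of the samples at t = 4, 5, 6, 7
```

Returning `None` for an empty window, rather than a default value, keeps a missing wearable segment visible to whatever model consumes the features. The trade-off is that any pattern finer than the window is lost in the summary.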

Missing or Incomplete Data

Missing data is an inevitable challenge in chronic disease management. Gaps can result from sensor malfunctions, patients skipping expensive tests (like PET scans), or non-compliance, all of which can weaken the accuracy of fusion models.

The impact of missing data is striking. For instance, an Alzheimer’s study using the ADNI dataset showed a predictive accuracy of 90% when all modalities were available. However, this dropped to 72.4% when incomplete data was used. Similarly, in mental health research, 76% of studies faced limitations due to small sample sizes (fewer than 100 participants), which only worsened the effects of missing information. Closing these data gaps is essential for improving the reliability of multimodal integration.

Privacy and Security Concerns

Integrating multimodal data raises serious privacy risks, particularly around patient re-identification. As highlighted in Frontiers in Artificial Intelligence:

"While data anonymization techniques help protect patient privacy, the risk of re-identification remains, particularly when datasets are cross-referenced".

Compliance with regulations like HIPAA, GDPR, and PIPEDA adds another layer of complexity. Continuous monitoring systems, such as aiSpine and aiRing, generate enormous amounts of personal health data, requiring strong security protocols to safeguard real-time clinical decisions. Ensuring this level of security is critical to maintaining patient trust in long-term monitoring systems.

Addressing these challenges is a key step toward building more effective AI-driven solutions for chronic disease management.

Limitations of Current Fusion Methods

Combining multimodal data for chronic disease management often relies on early fusion, intermediate fusion, and late fusion techniques. However, each approach comes with its own set of challenges, particularly when integrating sensor, imaging, and genomic data.

Early, Intermediate, and Late Fusion Techniques

Early fusion integrates raw or feature-level data but struggles with mismatched dimensions and sampling rates. As Maryam Farhadizadeh from the University of Freiburg points out:

"Early fusion may be insufficient when modalities differ in dimensionality, sampling rates, or semantic content, such as combining image data with structured omics data, where aligning and weighting heterogeneous features is non-trivial".

This method assumes all data types are equally informative and compatible, which is rarely the case.

Intermediate fusion processes each data type through separate neural network branches before merging them at hidden layers. While this approach is more adaptable than early fusion, it demands significant computational resources and careful balancing of different data signals. A review of 69 studies found intermediate fusion to be the most common approach (used in 24 studies), likely because it better handles dimensionality imbalances compared to early fusion.

Late fusion involves training separate models for each data type and combining their predictions at the decision-making stage. While effective for managing very different modalities, it falls short when modalities are closely related. Farhadizadeh explains:

"Late fusion… may be less effective for modalities with strong correlations, as it does not capture interactions between different data sources".

For instance, when sensor data from devices like aiRing closely correlates with imaging results, late fusion fails to account for these interconnections, limiting its diagnostic potential. These limitations highlight broader challenges like small sample sizes and high-dimensional data.
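The difference between the two ends of this spectrum can be made concrete with a toy sketch. Early fusion is shown as plain concatenation of feature vectors, and late fusion as a weighted average of per-modality predicted probabilities. The function names, feature values, and weights are hypothetical, and a real pipeline would train a model on the concatenated vector or on each modality.

```python
def early_fusion(features_by_modality):
    """Concatenate per-modality feature vectors into one input vector."""
    fused = []
    for name in sorted(features_by_modality):  # fixed order keeps columns stable
        fused.extend(features_by_modality[name])
    return fused

def late_fusion(probs_by_modality, weights=None):
    """Weighted average of probabilities predicted by separate per-modality models."""
    names = sorted(probs_by_modality)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    return sum(weights[name] * probs_by_modality[name] for name in names) / total

# Hypothetical inputs: three imaging features and one wearable feature.
fused_input = early_fusion({"imaging": [0.2, 0.8, 0.5], "wearable": [72.0]})

# Hypothetical outputs of two independently trained models.
probs = {"imaging": 0.8, "wearable": 0.6}
averaged = late_fusion(probs)
weighted = late_fusion(probs, weights={"imaging": 3.0, "wearable": 1.0})
```

Note how the concatenated vector mixes values on very different scales (0.2 next to 72.0), which is the weighting problem described for early fusion, while the late-fusion average never sees how the two inputs relate to each other.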

Small Sample Sizes and High Dimensionality

Beyond fusion techniques, issues like limited data availability and high-dimensional datasets further complicate model performance. Chronic disease research often faces the "small n, large p" problem – where small patient populations are paired with highly complex datasets. This is particularly evident in rare cancers or specific neurological conditions, where small sample sizes can lead to overfitting. Instead of learning general patterns, models memorize the training data, reducing their effectiveness on new datasets.

For example, Bhagwat et al. applied a Longitudinal Siamese Neural Network to ADNI data, achieving 0.900 accuracy. However, this dropped to 0.724 when tested on independent data, underscoring the overfitting challenge in chronic disease monitoring.

High-dimensional data, such as information from genomics or medical imaging, adds another layer of difficulty, particularly for real-time clinical use. While dimensionality reduction techniques like PCA or feature selection are often employed to simplify datasets, they risk discarding critical signals necessary for accurate diagnosis. The problem worsens when datasets lack entire modalities – for example, patients with electronic health records but no matching genomic or imaging data – further shrinking the practical sample size for multimodal models.
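To illustrate the risk of discarding signal, here is a deliberately simple feature-selection sketch that keeps the highest-variance columns. It stands in for feature selection in general and is not PCA; the helper names and the toy patient matrix are assumptions for the example.

```python
from statistics import pvariance

def top_k_by_variance(rows, k):
    """Return the indices of the k highest-variance columns (ties keep the lower index)."""
    n_features = len(rows[0])
    variances = [pvariance([row[j] for row in rows]) for j in range(n_features)]
    ranked = sorted(range(n_features), key=lambda j: (-variances[j], j))
    return sorted(ranked[:k])

def select_columns(rows, keep):
    """Keep only the chosen column indices in every row."""
    return [[row[j] for j in keep] for row in rows]

# Hypothetical toy matrix: 3 patients, 5 features.
patients = [
    [1.0, 10.0, 0.5, 3.0, 7.0],
    [1.0, 20.0, 0.6, 3.0, 1.0],
    [1.0, 30.0, 0.4, 3.0, 4.0],
]
keep = top_k_by_variance(patients, k=2)
reduced = select_columns(patients, keep)
```

Column 2 varies only slightly and is dropped, yet nothing in the variance ranking says whether that small variation was clinically meaningful. That is the kind of loss the paragraph above warns about.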

AI Solutions for Data Fusion

To tackle the challenges of integrating diverse data sources, AI employs advanced deep learning models designed for specific types of data.

Deep Learning Models for Data Fusion

Modern AI frameworks use specialized encoders to handle various data modalities. For instance, Convolutional Neural Networks (CNNs) analyze medical imaging, Long Short-Term Memory (LSTM) networks process sequential data from wearables, and Multi-Layer Perceptrons (MLPs) or Graph Convolutional Networks (GCNs) manage structured genomic or electronic health record (EHR) data.

In May 2025, a research team led by M. Chandra Sekhar at Presidency University introduced MedFusionAI, a deep learning framework designed to combine these specialized encoders. MedFusionAI achieved an impressive 98.76% accuracy in predicting chronic disease risks by employing attention mechanisms like transformers and cross-modal attention layers. These tools dynamically assessed the importance of different data types and unraveled complex relationships between them.

Another notable innovation came with the MODES framework, highlighted in early 2025 in npj Digital Medicine. This system separated shared and modality-specific information while working with data from the UK Biobank. By integrating ECG and cardiac MRI data, MODES accurately predicted cardiovascular phenotypes. Remarkably, it could infer missing MRI phenotypes using only ECG data, improving diagnostic precision.

Generative Adversarial Networks (GANs) and latent optimization techniques also play a key role in addressing missing data. For example, in October 2024, researchers unveiled HEALNet (Hybrid Early-fusion Attention Learning Network). Tested on four cancer datasets from The Cancer Genome Atlas (TCGA), HEALNet successfully integrated high-resolution histopathology images with multi-omic genomic data and maintained performance even when some data modalities were unavailable.

These advancements in deep learning continue to elevate predictive accuracy in chronic disease management.

Improving Predictive Accuracy for Chronic Diseases

Intermediate fusion techniques have proven effective in improving predictions by first learning modality-specific representations and then combining them into joint latent spaces. This method is particularly robust against challenges like small sample sizes and missing data.
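A rough sketch of that two-step idea follows: each modality is projected by its own encoder into a shared dimension, and the embeddings are then merged. The encoders here are fixed linear maps with made-up weights and the merge is a plain average, both chosen for brevity; in a trained model the encoders would be learned networks and the merge could be concatenation or attention.

```python
def linear_encode(x, weights):
    """Project input vector x with a weight matrix given as a list of rows (W @ x)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def intermediate_fusion(inputs, encoders):
    """Encode each modality into a shared dimension, then average the embeddings."""
    embeddings = [linear_encode(inputs[name], encoders[name]) for name in sorted(inputs)]
    dim = len(embeddings[0])
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]

# Hypothetical fixed weights; a real model would learn these during training.
encoders = {
    "imaging": [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]],  # 3 inputs -> 2 dimensions
    "wearable": [[0.5], [2.0]],                     # 1 input  -> 2 dimensions
}
inputs = {"imaging": [2.0, 4.0, 6.0], "wearable": [4.0]}

joint = intermediate_fusion(inputs, encoders)
```

Because each modality is mapped to the same number of dimensions before merging, a 3-feature input and a 1-feature input contribute on equal footing, which is how this family of methods sidesteps the dimensionality imbalance that troubles early fusion.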

In November 2025, researchers developed CURENet, a multimodal model that used large language models (LLMs) and transformer encoders to integrate unstructured clinical notes with time-series visit data. When tested on the MIMIC-III and FEMH datasets, CURENet achieved over 94% accuracy in predicting the top 10 chronic conditions. Cong-Tinh Dao and the CURENet Research Team emphasized:

"most predictive models fail to fully capture the interactions, redundancies, and temporal patterns across multiple data modalities".

Graph-based integration has also emerged as a transformative approach. In September 2025, the HGDC-Fuse framework introduced a patient-centric, multi-modal heterogeneous graph to handle asynchronous and incomplete data from the MIMIC-IV and MIMIC-CXR datasets. By leveraging disease correlation-guided attention layers, the system resolved inconsistencies between data types and enhanced multi-disease prediction.

A study involving 33 cancer types further demonstrated the effectiveness of multimodal models. Late fusion approaches, which combine per-modality predictions at the decision stage, outperformed single-modality models in 76% of cases. According to the AstraZeneca Oncology Data Science Team:

"late fusion models consistently outperformed single-modality approaches in TCGA lung, breast, and pan-cancer datasets, offering higher accuracy and robustness".

These AI-driven methods show how integrating diverse data sources with advanced architectures can overcome issues like data heterogeneity, missing values, and alignment challenges, leading to significantly improved outcomes.

How AIH Wearables Address Data Fusion Challenges

AIH LLC leverages its AI-powered RTM platform to seamlessly integrate data from wearable devices. The aiSpine device tracks and records angular and curvature changes in the neck and back, providing real-time musculoskeletal insights. Meanwhile, the aiRing uses advanced sensors to continuously monitor vital signs and other physiological parameters.

Integration of aiSpine and aiRing Data

The AIH Health App acts as the central hub for integrating data from wearables, imaging, and genomics. It employs an intermediate fusion strategy that maps diverse inputs into a shared semantic space. This approach resolves the fragmentation often caused by unimodal data, enabling more nuanced diagnostic insights.

AI-powered algorithms autonomously process and monitor health data, identifying cross-domain patterns, such as links between posture changes and vital sign fluctuations. Studies reveal that multimodal AI models outperform single-source systems by 6%–33%.

These techniques lay the groundwork for continuous management of chronic diseases.

Real-Time Chronic Disease Monitoring

AIH’s RTM services are designed to monitor musculoskeletal health, respiratory status, therapy adherence, and treatment responses, ensuring effective management of chronic conditions. The system uses attention mechanisms to highlight relevant evidence even when some data points are missing or delayed. Research indicates that secure multimodal data fusion frameworks can improve diagnostic accuracy by 9.8% and reduce client dropout rates by 54%.

The AIH Health App transforms raw data into actionable insights by identifying meaningful patterns, comparing metrics to personal baselines, and suggesting targeted interventions. Specialized AI agents analyze specific data types, applying domain expertise rather than relying solely on general algorithms.
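Comparing a metric to a personal baseline can be as simple as expressing the new reading in units of that person's own variability. The sketch below is a generic illustration of the idea, not a description of how the AIH Health App computes it; the function name and readings are hypothetical.

```python
from statistics import mean, stdev

def baseline_deviation(history, current):
    """Return how many baseline standard deviations `current` lies from the personal mean.

    Returns None when the baseline has no spread, since the ratio is undefined.
    """
    if len(history) < 2:
        raise ValueError("need at least two baseline readings")
    spread = stdev(history)
    if spread == 0:
        return None
    return (current - mean(history)) / spread

# Hypothetical resting heart-rate baseline and a new reading.
resting_hr = [60, 62, 64, 62, 62]
z = baseline_deviation(resting_hr, current=66)
```

A reading of 66 would look unremarkable against population norms, but it sits well outside this individual's usual range, which is the kind of signal a personal baseline is meant to surface.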

Future Development of AIH Technologies

AIH LLC’s platform is set to grow alongside advancements in Generalist Medical AI (GMAI) and Sensory AI. Its architecture supports new modalities, such as vocal biomarkers and advanced imaging, which could lead to the creation of "Human Digital Twins" for non-invasive monitoring of mental and respiratory health. As Yan Hao et al. noted in the Journal of Medical Internet Research:

"The integration of these diverse data sources enables a more nuanced and comprehensive understanding of patient health".

The platform is designed for ongoing refinement, allowing its AI models to improve as more multimodal data becomes available. This evolution paves the way for large-scale frameworks that enhance accuracy and expand chronic disease applications. With healthcare increasingly moving toward integrated systems that combine imaging, electronic health records, and wearable IoT data, AIH LLC’s expertise in multimodal data fusion positions it to deliver even more advanced solutions for chronic disease management.

Conclusion

Key Takeaways

Managing chronic diseases effectively requires pulling together data from sensors, imaging, and genomic sources. This process is challenging due to issues like inconsistent data formats, missing information, uneven data dimensions, and privacy concerns. Traditional methods often fall short, leading to fragmented insights that can overwhelm clinicians.

Deep learning has stepped in to address these challenges. By using tools like modality-specific encoders, attention mechanisms, and generative models, these advanced techniques create unified data representations. This approach has been shown to improve diagnostic accuracy and patient outcomes. Research highlights that multimodal AI models consistently outperform single-modality approaches, achieving over 90% accuracy in diseases like Alzheimer’s and COVID-19. As Ruiying Zhang explained in Frontiers in Medicine:

"Multimodal AI technologies are transforming medical practices by integrating diverse data sources to enable more accurate diagnosis, disease prediction, and treatment planning".

These advancements are paving the way for the next generation of chronic care solutions.

The Future of Chronic Disease Management

The rise of real-time, continuous monitoring through wearable devices is reshaping how chronic diseases are managed. AIH LLC’s system – which integrates the aiSpine, aiRing, and AIH Health App – offers a glimpse into this future. By using intermediate fusion, it addresses data fragmentation while ensuring functionality even when some data inputs are temporarily unavailable.

Looking ahead, advancements in AI and data fusion are set to transform real-time patient monitoring. The healthcare industry is moving toward systems that seamlessly merge imaging, electronic health records, and wearable IoT data. This shift promises more than just better diagnostics – it opens the door to personalized treatment plans tailored to each patient’s unique health journey. The focus is shifting from reacting to problems as they arise to proactively managing chronic conditions.

FAQs

How do you align wearable data with imaging and EHR timelines?

Aligning data from wearable devices with imaging and electronic health record (EHR) timelines means ensuring everything lines up in terms of timing and relevance. This process involves syncing timestamps across different devices, using standardized formats like FHIR (Fast Healthcare Interoperability Resources) for consistent data mapping, and applying techniques to combine data from multiple sources effectively.

To tackle issues like latency differences between systems, advanced algorithms come into play. These algorithms help merge data streams seamlessly, offering a more complete view of a person’s health. With these measures in place, healthcare providers can enable real-time monitoring and make more informed, proactive decisions – especially when managing chronic conditions.
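The timestamp-syncing step can be sketched as follows, assuming every source emits ISO 8601 timestamps with an explicit UTC offset. The function names and the three sample events are hypothetical. Parsing FHIR resources is out of scope here, although FHIR `dateTime` values that include a time also carry an offset.

```python
from datetime import datetime, timezone

def to_utc(iso_timestamp):
    """Parse an ISO 8601 timestamp with an explicit offset and convert it to UTC."""
    parsed = datetime.fromisoformat(iso_timestamp)
    if parsed.tzinfo is None:
        raise ValueError("timestamp needs an explicit UTC offset")
    return parsed.astimezone(timezone.utc)

def merged_timeline(events):
    """Sort (source, iso_timestamp, label) events from several systems by UTC time."""
    return sorted((to_utc(ts), source, label) for source, ts, label in events)

# Hypothetical events recorded by three systems in three local time zones.
events = [
    ("wearable", "2025-03-01T09:00:00-05:00", "hr=88"),
    ("imaging", "2025-03-01T13:30:00+00:00", "CT scan"),
    ("ehr", "2025-03-01T15:15:00+01:00", "visit note"),
]

timeline = merged_timeline(events)
order = [source for _, source, _ in timeline]
```

Rejecting timestamps without an offset is a deliberate choice: silently assuming a time zone is an easy way to misorder events by hours.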

What’s the best way to handle missing modalities without losing accuracy?

When dealing with missing modalities in multimodal data fusion for chronic disease management, it’s crucial to use models that can handle incomplete data effectively. Approaches such as transformer-based architectures with masked self-attention or methods that separate shared and modality-specific features offer flexibility by dynamically adjusting to missing inputs. These techniques help to minimize bias, eliminate the need for imputation, and ensure reliable data integration. This way, accuracy is preserved even when certain types of data are unavailable.
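The masking idea can be shown in a few lines: attention weights are computed with a softmax over the modalities that are actually present, so a missing input gets no weight and needs no imputed value. This is a simplified stand-in for masked self-attention, and the relevance scores are hard-coded here, whereas a real model would learn them.

```python
import math

def masked_attention_fusion(embeddings, scores):
    """Fuse modality embeddings using softmax weights over present modalities only.

    embeddings: dict of name -> vector, or None when the modality is missing.
    scores: dict of name -> relevance score (hypothetical; learned in a real model).
    """
    present = [name for name in sorted(embeddings) if embeddings[name] is not None]
    if not present:
        raise ValueError("at least one modality is required")
    top = max(scores[name] for name in present)  # subtract max for numerical stability
    exp = {name: math.exp(scores[name] - top) for name in present}
    total = sum(exp.values())
    weights = {name: exp[name] / total for name in present}
    dim = len(embeddings[present[0]])
    fused = [sum(weights[n] * embeddings[n][i] for n in present) for i in range(dim)]
    return fused, weights

# Hypothetical patient with ECG and EHR embeddings but no MRI.
embeddings = {"ecg": [1.0, 0.0], "mri": None, "ehr": [0.0, 1.0]}
scores = {"ecg": 0.0, "mri": 5.0, "ehr": 0.0}

fused, weights = masked_attention_fusion(embeddings, scores)
```

Even though the MRI has the highest relevance score, it is excluded before the softmax, so the remaining weights still sum to one.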

How can multimodal fusion stay HIPAA-compliant and reduce re-identification risk?

To maintain HIPAA compliance and reduce the risk of re-identifying individuals in multimodal data fusion, it’s essential to apply privacy-focused techniques. For instance, differential privacy helps safeguard sensitive information by adding noise to the data, making it harder to trace back to individuals.
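As a minimal sketch of the noise-adding idea, the example below applies the Laplace mechanism to an aggregate count rather than to individual records. The function names, the `epsilon` value, and the cohort are assumptions for illustration; production systems should rely on a vetted differential privacy library rather than hand-rolled sampling.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng):
    """Release a count with Laplace noise; a counting query has sensitivity 1."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical cohort: resting heart rates for six patients.
cohort = [58, 91, 77, 102, 66, 95]
rng = random.Random(0)  # seeded only to make the example repeatable

noisy = private_count(cohort, lambda hr: hr > 90, epsilon=1.0, rng=rng)
```

A smaller `epsilon` means more noise and stronger privacy. Each released statistic also consumes part of a privacy budget, so repeated queries on the same data weaken the guarantee.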

Another effective approach is federated learning, which processes data locally and shares only model updates instead of raw data. This means sensitive information never leaves the original source, reducing exposure risks.
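The aggregation step at the heart of this approach can be sketched as a sample-weighted average of client model weights, in the style of federated averaging. Local training, secure transport, and any clipping or noise are omitted, and the site sizes and weight vectors are hypothetical.

```python
def federated_average(client_updates):
    """Average client model weights, weighting each client by its sample count.

    client_updates: list of (weights, n_samples) tuples; raw records never appear here.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]

# Hypothetical updates from two hospitals with 100 and 300 local patients.
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_weights = federated_average(updates)
```

Only the weight vectors and sample counts cross the network. Model updates can still leak information about the training data, so federated learning is often combined with the noise-based protections described above.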

On top of that, using secure communication protocols, encryption, and strict access controls adds extra layers of protection. When these steps are paired with strong data governance practices, sensitive health information remains secure – especially critical in managing chronic diseases.