On February 2, 2026, the FDA's Quality Management System Regulation (QMSR) replaced the Quality System Regulation (QSR), aligning U.S. requirements with ISO 13485:2016. This shift emphasizes a risk-based approach to managing medical device quality, which raises particular challenges for devices powered by artificial intelligence (AI) and machine learning (ML). Unlike static devices, AI/ML-enabled devices can change over time, so they need ongoing performance monitoring and oversight of risks like data bias and model drift.

Key points:

- Traditional Devices: Fixed performance; risks include hardware failures, calibration errors, and design flaws. Compliance involves design controls, audits, and ISO 14971-based risk management.

- AI/ML Devices: Dynamic behavior; risks stem from data quality, algorithm changes, and cybersecurity. Compliance now includes treating datasets as controlled documents, statistical validation, and post-market monitoring.

- New FDA Mechanisms: Predetermined Change Control Plans (PCCPs) let manufacturers have specific AI updates authorized in advance, reducing the need for new submissions.

AI/ML devices like wearables face added complexities due to variability in usage and reliance on cloud services. While offering flexibility, they demand stricter quality controls and continuous risk management to ensure patient safety.

1. Traditional Medical Devices

Risk Types

Traditional medical devices come with a variety of risks that span their entire lifecycle. These include hardware failures, software malfunctions in locked code, physical harm to patients, sterilization failures, contamination, equipment calibration errors, design flaws, and material defects. A lack of traceability from raw materials to the final product can further amplify these risks.

The U.S. Quality System Regulation traces back to the 1978 good manufacturing practice rule and took its QSR form in the 1996 revision. In that text, the term "risk" appeared only once, in the design validation requirement for risk analysis. This left significant gaps in addressing newer challenges such as cybersecurity and usability engineering, which is why strong compliance measures matter.

QSR Compliance Challenges

As risks evolve, so do compliance requirements. The transition to the Quality Management System Regulation (QMSR) on February 2, 2026, marked a significant shift. The FDA replaced the Quality System Inspection Technique (QSIT) with a risk-based inspection playbook (CP 7382.850). This new approach requires investigators to evaluate risk signals based on a firm’s own risk management documentation.

Under QMSR, manufacturers must now adhere to stricter guidelines, including:

- Creating a formal Quality Manual and Medical Device File that align with ISO 13485 standards.

- Conducting internal audits and management reviews, which are now subject to FDA inspection.

- Validating all quality system software, such as the tools used for complaint tracking and document control.

These changes demand more meticulous planning and documentation from manufacturers to ensure compliance.

Risk Mitigation Strategies

To manage risks effectively, manufacturers need a comprehensive, lifecycle-focused risk management approach. Leveraging ISO 14971, they can identify hazards and implement mitigation strategies at every stage of the device lifecycle. This involves:

- Implementing design controls with well-defined inputs and outputs.

- Conducting verification and validation to confirm devices meet user needs.

- Differentiating Corrective Actions (addressing existing issues like complaints or audit findings) from Preventive Actions (focusing on potential issues identified through trend analysis or risk assessments).

Additional steps include:

- Enforcing risk-based supplier oversight.

- Validating critical processes, such as sterilization.

- Performing regular management reviews to address quality system gaps.

While this structured approach works well for traditional devices, the introduction of AI/ML systems brings new layers of complexity to risk management.


2. AI/ML-Enabled Medical Devices

Risk Types

AI-driven medical devices face unique challenges that traditional devices don’t encounter. A significant issue is their reliance on data quality. If the training data is biased or doesn’t represent the target population, it can lead to unsafe outcomes for certain groups of patients.

Another concern is model drift. Over time, AI systems can lose accuracy as incoming data changes or as the relationship between inputs and outputs evolves. This drift can compromise safety, and the complexity of deep neural networks makes clinical validation even more difficult. Additionally, AI models often struggle to generalize, performing well in one environment but poorly in another due to differences in patient demographics or the equipment used. They’re also susceptible to adversarial inputs, where small tweaks to data can cause the system to produce incorrect results.

QSR Compliance Challenges

The shift to the Quality Management System Regulation (QMSR) in February 2026 brought new compliance challenges for AI devices, extending beyond the traditional software validation frameworks. Traditional Quality System Regulation (QSR) guidelines assumed devices were "locked" after approval, but AI systems that learn and adapt over time challenge this notion.

Under QMSR, datasets are now treated as controlled documents within the Quality Management System. This means manufacturers must implement version control, standardized labeling, and traceability to ensure every data version is linked to specific model iterations. Design controls now include requirements for data and algorithm specifications as part of the design inputs, while design outputs must document trained model weights and validation results.
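The dataset-to-model traceability described above can be sketched in a few lines. This is a minimal illustration, not a prescribed QMS format: the record fields, the document numbers, and the use of SHA-256 as a content fingerprint are all assumptions made for the example.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def sha256_of_bytes(data: bytes) -> str:
    """Return the hex SHA-256 digest used here as a content fingerprint."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class DatasetRecord:
    # All field names are hypothetical; adapt them to your own document control scheme.
    dataset_id: str   # controlled document number
    version: str      # revision label
    sha256: str       # fingerprint of the frozen data file
    label_spec: str   # reference to the labeling procedure that was applied


@dataclass(frozen=True)
class ModelRecord:
    model_id: str
    version: str
    weights_sha256: str
    trained_on: tuple  # DatasetRecord entries used to train this model version


def verify_dataset(record: DatasetRecord, data: bytes) -> bool:
    """Check that the stored bytes still match the controlled revision."""
    return sha256_of_bytes(data) == record.sha256


def traceability_entry(model: ModelRecord) -> str:
    """Serialize the model-to-dataset link as JSON for the design records."""
    return json.dumps(asdict(model), indent=2, sort_keys=True)
```

A model version can then be traced to the exact data revisions it was trained on, and any silent change to a data file shows up as a fingerprint mismatch.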

Verification and validation have also evolved. Instead of simply testing the logic of the code, AI validation now requires statistical performance evaluations using independent datasets that weren’t involved in model training. By early 2026, the FDA had authorized over 1,000 AI/ML-enabled medical devices. However, a 2025 study of 691 FDA-cleared AI devices found that only 28% had public summaries documenting premarket safety assessments.
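As an illustration of statistical validation on a held-out set, the sketch below reports sensitivity with a Wilson score confidence interval and compares the lower bound against a pre-specified requirement. The choice of metric, the 95% level, and the acceptance rule are assumptions for the example; a real validation plan defines its own.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


def sensitivity_with_ci(y_true, y_pred):
    """Sensitivity and its Wilson interval; labels are 0/1 from an independent test set."""
    positives = sum(1 for t in y_true if t == 1)
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    low, high = wilson_interval(true_pos, positives)  # raises if no positives
    return true_pos / positives, low, high


def meets_criterion(lower_bound: float, required: float) -> bool:
    """Hypothetical acceptance rule: the CI lower bound must reach the requirement."""
    return lower_bound >= required
```

Judging the lower bound rather than the point estimate keeps a small test set from passing on a lucky sample.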

Risk Mitigation Strategies

To address AI-specific risks, manufacturers can use Predetermined Change Control Plans (PCCPs). These plans allow updates that the FDA has authorized in advance – like retraining models with new data – without requiring a new 510(k) submission for every change.

Adopting Good Machine Learning Practice (GMLP) is crucial for ensuring proper data management, model training, and reproducibility. Implementing bias analysis frameworks helps identify and address performance disparities across different demographic groups.
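A bias analysis can start with something as simple as comparing one metric across subgroups. The sketch below computes per-group sensitivity and flags groups that trail the best-performing group by more than a chosen gap. The group labels, the metric, and the 0.05 default gap are illustrative assumptions, not regulatory thresholds.

```python
from collections import defaultdict


def subgroup_sensitivity(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels.

    Returns sensitivity per group, for groups that have at least one positive case.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def flag_disparities(rates, max_gap=0.05):
    """Return groups whose rate trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)
```

In practice each subgroup estimate also needs enough positive cases to be meaningful; a flagged group is a prompt for investigation, not a conclusion.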

Continuous post-market monitoring is another key strategy. AI systems must be equipped to detect performance drift in real time and initiate corrective actions when thresholds are exceeded. Given past recalls tied to algorithmic errors, rigorous surveillance after market release is non-negotiable.
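One common way to detect input drift is the Population Stability Index (PSI), which compares the distribution of a feature in production against a reference sample. The sketch below is a minimal version; the bin edges, the 0.1 and 0.2 cut-offs (a widely used rule of thumb, not an FDA threshold), and the status names are assumptions for illustration.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a reference sample and a production sample.

    edges: sorted bin boundaries; values fall into len(edges) + 1 bins.
    eps guards against log(0) when a bin is empty.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(1 for e in edges if v >= e)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(value, warn=0.1, act=0.2):
    """Map a PSI value to a hypothetical monitoring outcome."""
    if value >= act:
        return "corrective_action"
    if value >= warn:
        return "investigate"
    return "ok"
```

PSI only watches the inputs. Monitoring output performance against confirmed outcomes, where those are available, covers the case where the input distribution is stable but the input-output relationship has changed.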

These strategies are especially important when dealing with the added complexities of AI-enabled wearables.

Wearable Device Implications

Wearables bring additional challenges on top of the risks associated with AI. Unlike traditional devices, wearables operate in dynamic environments, requiring them to adapt to constantly changing data conditions. Factors like user movement, environmental variability, and inconsistent usage patterns can affect data quality. The push for continuous monitoring demands Quality Management System strategies capable of handling these variables.

Many AI-powered wearable functions fall under the Software as a Medical Device (SaMD) classification, with their risk levels categorized by the IMDRF framework (Categories I–IV). For example, in September 2018, Apple Inc. received De Novo authorization for the Apple Watch ECG app, one of the first FDA-authorized ECG features on a consumer wearable. This set a regulatory benchmark for diagnostic wearables powered by AI.
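The IMDRF categories mentioned above come from crossing two factors: the state of the healthcare situation and the significance of the information the software provides. The lookup below encodes that matrix as published in the IMDRF SaMD risk categorization framework; the key names and function are naming choices made for this sketch.

```python
# (state of healthcare situation, significance of information) -> IMDRF SaMD category
IMDRF_CATEGORY = {
    ("critical", "treat_or_diagnose"): "IV",
    ("critical", "drive_management"): "III",
    ("critical", "inform_management"): "II",
    ("serious", "treat_or_diagnose"): "III",
    ("serious", "drive_management"): "II",
    ("serious", "inform_management"): "I",
    ("non_serious", "treat_or_diagnose"): "II",
    ("non_serious", "drive_management"): "I",
    ("non_serious", "inform_management"): "I",
}


def imdrf_category(state: str, significance: str) -> str:
    """Look up the SaMD category; raises ValueError for unknown inputs."""
    try:
        return IMDRF_CATEGORY[(state, significance)]
    except KeyError:
        raise ValueError(f"unknown combination: {state!r}, {significance!r}")
```

Category IV carries the highest impact and Category I the lowest, so a wearable feature that only informs management of a non-serious condition sits at the low end of the scale.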

Since wearable devices often rely on cloud services for data processing, cybersecurity becomes a critical focus. This includes using encryption, secure software update systems, and comprehensive incident response plans. The combination of AI algorithms, wireless connectivity, and consumer-grade hardware creates a complex risk profile that needs careful management throughout the device’s lifecycle.

AIH LLC has integrated these strategies into its aiSpine posture monitor and aiRing vital signs ring, ensuring reliable performance and prioritizing patient safety.


Advantages and Disadvantages

Traditional vs AI/ML Medical Devices: Key Differences in Risk Management and Compliance

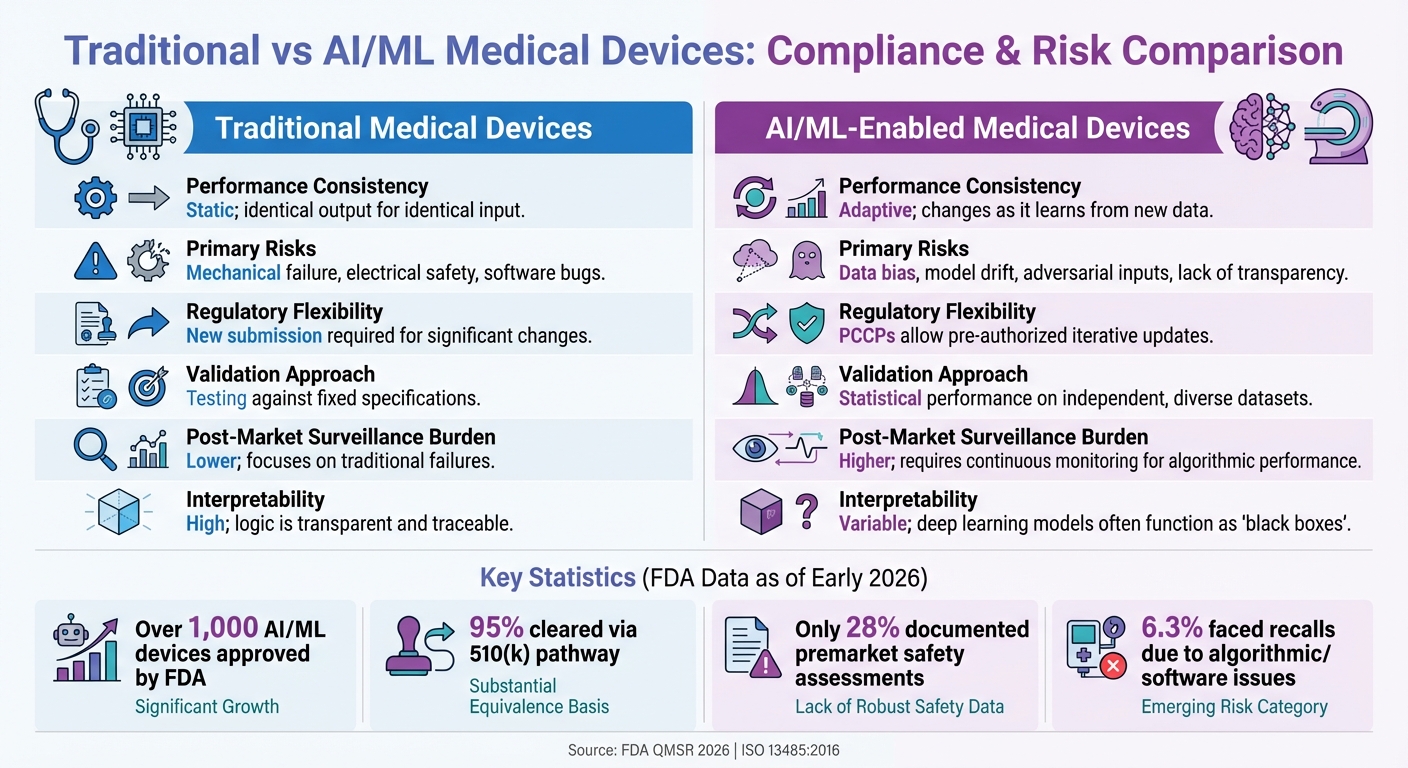

Traditional and AI/ML-enabled devices each bring their own set of strengths and challenges when it comes to regulatory compliance and ensuring patient safety.

Traditional devices are known for their predictable performance. Once validated, they function consistently and reliably, with risks largely tied to mechanical failures, electrical issues, or software bugs. These are well-documented and can be effectively managed through standard ISO 14971 risk management practices. When updates or modifications are necessary, the process is straightforward: significant changes require a new 510(k) submission.

AI/ML-enabled devices, on the other hand, stand out for their adaptive nature. They can improve over time by retraining on new data, which can enhance diagnostic accuracy or patient outcomes without needing entirely new regulatory filings. Predetermined Change Control Plans (PCCPs) allow manufacturers to make pre-authorized updates, offering a degree of flexibility that traditional devices cannot match. As of early 2026, the FDA has authorized over 1,000 AI/ML-enabled devices, with 95% cleared via the 510(k) pathway. However, despite this progress, many manufacturers have yet to fully leverage these regulatory advancements.

The challenges with AI systems lie in their complexity. As highlighted in QMSR compliance discussions, their performance is heavily reliant on the quality of training data, making them susceptible to issues like bias and drift. A study of 691 FDA-cleared AI devices found that only 28% documented premarket safety assessments, and about 6.3% of these devices have faced recalls due to algorithmic or software-related issues. Additionally, deep learning models often function as "black boxes", making their decision-making processes difficult to interpret compared to traditional rule-based systems. AI devices also require ongoing post-market monitoring to identify potential performance issues, adding a layer of operational complexity.

Here’s a quick comparison of the two device types:

| Aspect | Traditional Medical Devices | AI/ML-Enabled Medical Devices |
|---|---|---|
| Performance Consistency | Static; identical output for identical input | Adaptive; changes as it learns from new data |
| Primary Risks | Mechanical failure, electrical safety, software bugs | Data bias, model drift, adversarial inputs, lack of transparency |
| Regulatory Flexibility | New submission required for significant changes | PCCPs allow pre-authorized iterative updates |
| Validation Approach | Testing against fixed specifications | Statistical performance on independent, diverse datasets |
| Post-Market Surveillance Burden | Lower; focuses on traditional failures | Higher; requires continuous monitoring for algorithmic performance |
| Interpretability | High; logic is transparent and traceable | Variable; deep learning models often function as "black boxes" |

For wearable devices like AIH LLC’s aiSpine and aiRing, the choice isn’t a simple either/or. These products combine AI’s adaptability with rigorous data governance, bias analysis, and continuous monitoring to address AI-specific risks. This approach aims to balance innovation with the reliability that patients expect from medical devices, and the comparison above shows the trade-offs manufacturers have to weigh in doing so.

Conclusion

The shift from traditional devices to those powered by AI and machine learning (AI/ML) demands a fresh approach to quality management and risk control. Unlike traditional devices, which rely on predictable risks and maintain consistent performance after validation, AI/ML devices introduce complexities like bias, model drift, and opacity. These challenges require manufacturers to treat datasets as critical, controlled components of the device itself.

The Quality Management System Regulation (QMSR), in effect since February 2, 2026, provides the framework for addressing these challenges. It aligns more closely with ISO 13485:2016, and its risk-based approach suits the dynamic nature of AI systems. Alongside it, the Predetermined Change Control Plan (PCCP) lets manufacturers have planned model updates authorized in advance, so they do not need to file a new 510(k) submission for every retraining cycle. However, this flexibility comes with heightened responsibility. As MedDeviceGuide aptly explains:

The PCCP framework does not eliminate regulatory oversight – it requires early, rigorous oversight.

Effectively managing the unique risks of AI/ML devices means embedding quality controls throughout the entire device lifecycle – from design to post-market surveillance. This includes formalizing data governance, conducting regular bias audits across diverse demographic groups, and adopting automated validation processes to keep up with frequent model updates. The current lack of comprehensive premarket safety documentation for FDA-cleared AI devices highlights just how much work still needs to be done.

For companies like AIH LLC, which develops AI-powered wearables such as aiSpine and aiRing, success hinges on blending adaptive features with strict risk management practices. This involves implementing version control, roll-back plans, and ongoing post-market monitoring to detect and address performance drift. The challenge is to merge the reliability of traditional systems with the adaptability of AI while ensuring patient safety at every step.

On a global scale, efforts to harmonize regulations further emphasize the importance of robust AI quality systems. Manufacturers who prioritize strong quality management frameworks, maintain transparent documentation, and commit to continuous monitoring will be better equipped to adapt to these changes. By integrating traditional risk management practices with adaptive controls, the industry can pave the way for innovation while ensuring improved outcomes for patients.

FAQs

What is the biggest QMSR change for AI/ML medical devices?

The most notable update in the Quality Management System Regulation (QMSR) for AI/ML medical devices is the incorporation of risk management throughout the entire quality system. This update brings the regulation in line with ISO 13485:2016. The focus is now on a risk-based approach that spans the entire product lifecycle – covering management, design, and manufacturing. Manufacturers are expected to actively identify and address risks tied to AI/ML components, ensuring these devices remain safe and effective.

How do you control and trace training datasets in a QMS?

Controlling and tracing training datasets within a Quality Management System (QMS) means treating them like any other controlled document. Each dataset revision gets a version identifier, standardized labeling, and a recorded link to the model iterations trained on it, backed by detailed records of data collection and validation. These steps are crucial for maintaining data integrity, ensuring reproducibility, and meeting regulatory requirements. Thorough documentation not only promotes transparency but also aligns with the standards expected for AI/ML model training in the development of medical devices.

What should a PCCP include for model updates and retraining?

A PCCP should clearly outline the process for model updates and retraining. Following the structure in FDA’s PCCP guidance, that means a description of the planned modifications, a modification protocol specifying how changes will be implemented and verified, and an impact assessment evaluating how the updates could affect the device’s safety and performance. Proper documentation is essential to ensure compliance and uphold performance standards.
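The verification step of such a plan can be pictured as a gate that checks a retrained model against acceptance criteria fixed in advance. The sketch below is a hypothetical illustration: the metric names, minimum values, and result format are assumptions, and a real modification protocol would specify its own test methods and datasets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriterion:
    metric: str     # name of a metric measured on the locked test set
    minimum: float  # pre-specified minimum acceptable value


def evaluate_update(metrics: dict, criteria: list) -> dict:
    """Compare a retrained model's metrics with the pre-specified criteria.

    A metric that is missing from the results counts as a failure.
    """
    failed = [
        c.metric
        for c in criteria
        if metrics.get(c.metric, float("-inf")) < c.minimum
    ]
    return {"within_plan": not failed, "failed": failed}


# Hypothetical criteria, written down before any retraining takes place.
CRITERIA = [
    AcceptanceCriterion("sensitivity", 0.90),
    AcceptanceCriterion("specificity", 0.85),
]
```

An update that fails the gate falls outside the authorized plan and would need to go through the normal change assessment instead of being released under the PCCP.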