The FDA is reshaping how AI-enabled medical devices are managed. On January 7, 2025, the FDA issued draft guidance introducing a Total Product Life Cycle (TPLC) framework. This approach ensures ongoing oversight of AI devices from development to decommissioning. With over 1,000 AI-enabled devices already authorized, the guidance addresses critical challenges like data drift, AI bias, and cybersecurity risks.

Key takeaways:

- Data integrity is essential: AI models are part of the device’s mechanism, meaning data quality directly impacts safety.

- New tools for manufacturers: Predetermined Change Control Plans (PCCPs) simplify AI updates, while Good Machine Learning Practices (GMLP) ensure consistent quality.

- Stronger transparency: Tools like "Model Cards" make an AI model’s training, performance, and limitations clearer and more understandable.

This guidance helps manufacturers meet regulatory standards while improving safety and performance across AI-powered devices.

FDA AI Guidance for Medical Devices: What You Need to Know

On January 7, 2025, the FDA released draft guidance outlining its first unified framework for the design, development, and maintenance of AI-enabled medical devices. This new approach moves away from one-time approvals and introduces a Total Product Life Cycle (TPLC) model. Under this model, the FDA will oversee devices from their initial development stages through deployment, maintenance, and eventual decommissioning.

Troy Tazbaz, Director of the Digital Health Center of Excellence at the FDA, highlighted the importance of this milestone:

"The FDA has authorized more than 1,000 AI-enabled devices through established premarket pathways. As we continue to see ongoing developments in this field, it’s important to recognize that there are specific considerations unique to AI-enabled devices."

In line with this, the draft guidance sets out what marketing submissions should contain to address the unique challenges posed by AI technologies. For submissions across pathways like 510(k), De Novo, and PMA, it identifies seven key documentation areas: device description (clearly identifying AI use), risk assessment, data management, model development details, performance validation, cybersecurity measures, and postmarket monitoring plans.

A critical point in the guidance is that for AI devices, the model itself is considered part of the mechanism of action. The quality of the data used to build and test the model therefore directly affects patient safety, which is why the guidance calls for robust documentation of AI models and data management practices. These recommendations emphasize the importance of maintaining data integrity throughout the device’s lifecycle and lay the groundwork for the guidance’s primary focus areas.

Main Focus Areas in the FDA Guidance

The FDA’s guidance focuses on three main areas that manufacturers need to address:

- Transparency and Explainability: The guidance points manufacturers to "Model Cards" as a way to provide standardized summaries of an AI model’s training and performance characteristics. The FDA defines transparency as making information "both accessible and functionally comprehensible", which ties into both how information is shared and how the device is used.

- Bias Management: To ensure safety and effectiveness for all intended populations, manufacturers must evaluate device performance across diverse demographic groups, including race, ethnicity, sex, and age.

- Predetermined Change Control Plans (PCCPs): A PCCP lets manufacturers describe planned modifications to an AI model in the original marketing submission, so that changes made within the authorized plan do not need a fresh marketing submission for every update.
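To make the Model Card idea concrete, here is a minimal sketch of one as a structured record. The class, its field names, and the example values are all illustrative assumptions, not an FDA-specified schema, and the numbers are made-up placeholders.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class ModelCard:
    """Hypothetical model card layout; field names are illustrative, not an FDA schema."""
    device_name: str
    model_version: str
    intended_use: str
    training_data_summary: str
    # Metric name -> {subgroup -> value}, so subgroup performance is visible at a glance.
    performance_by_subgroup: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the card so it can be shared alongside device documentation."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    def missing_fields(self) -> list:
        """Return the names of fields left empty, as a simple completeness check."""
        return [name for name, value in asdict(self).items() if not value]


# Placeholder values for illustration only.
card = ModelCard(
    device_name="ExampleDevice",
    model_version="1.0.0",
    intended_use="Placeholder description of intended use",
    training_data_summary="Placeholder description of data sources and demographics",
    performance_by_subgroup={"sensitivity": {"age_18_40": 0.91, "age_65_plus": 0.88}},
)
```

Calling `card.missing_fields()` flags any section that was left blank, which is one simple way to keep summaries consistent from one model version to the next.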

The guidance also encourages manufacturers to engage with the FDA early in the process through the Q-Submission Program to discuss novel AI technologies or validation methods before formal submission. Additionally, aligning development processes with established standards like ISO 14971 for risk management is strongly recommended to promote consistency across submissions.

Data Integrity Challenges in AI Medical Devices

FDA AI Medical Device Data Integrity Challenges and Solutions

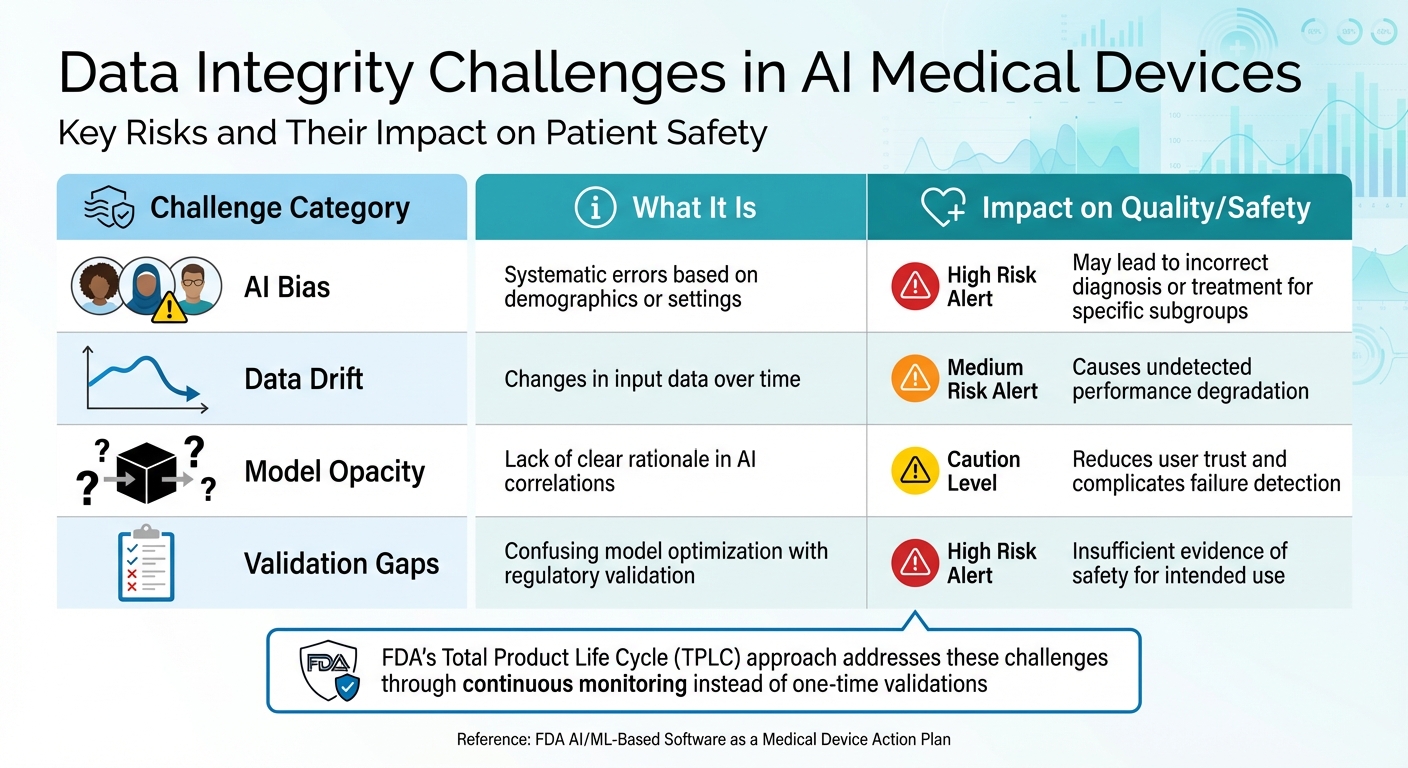

In line with the FDA’s Total Product Life Cycle model, tackling data integrity challenges during both the design and deployment phases is critical. The FDA’s guidance sheds light on several unique challenges tied to AI systems, which often rely on intricate data patterns without clear biological or mechanical explanations. For manufacturers aiming to meet regulatory standards, understanding and addressing these risks is a must. Below, we break down key challenges related to training datasets, data source transparency, and performance metric validation.

Data Quality and Bias in Training Datasets

One of the biggest hurdles in maintaining data integrity is ensuring the quality and fairness of training datasets. AI bias stands out as a major concern. The FDA defines bias as:

"a potential tendency to produce incorrect results in a systematic, but sometimes unforeseeable way, which can impact safety and effectiveness of the device within all or a subset of the intended use population".

When training datasets fail to represent a diverse patient population, the AI may struggle to provide accurate diagnoses or treatment recommendations for certain groups. For example, a model trained predominantly on data from one demographic might overlook critical patterns in another, potentially leading to delayed or incorrect clinical decisions. On top of this, overfitting – where the model becomes too tailored to the training data – can further compromise its real-world performance.
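One way to surface this kind of bias is to compute the same metric separately for each subgroup and compare. The sketch below does this for sensitivity. The function names, group labels, and data are hypothetical, and any acceptable gap between groups would have to be defined and justified by the manufacturer.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity (true positive rate) per subgroup.

    Each record is (group, y_true, y_pred), where 1 means the condition is present.
    Groups with no positive cases are reported as None.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    groups = set()
    for group, y_true, y_pred in records:
        groups.add(group)
        if y_true == 1:
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    result = {}
    for group in sorted(groups):
        positives = true_pos[group] + false_neg[group]
        result[group] = true_pos[group] / positives if positives else None
    return result


def max_sensitivity_gap(per_group):
    """Largest difference in sensitivity between any two subgroups with data."""
    values = [v for v in per_group.values() if v is not None]
    return max(values) - min(values) if values else 0.0


# Synthetic example data: (subgroup, ground truth, model prediction).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 0), ("B", 0, 0),
]
per_group = sensitivity_by_group(records)
gap = max_sensitivity_gap(per_group)
```

In this synthetic data the model finds three of four positive cases in group A but only one of four in group B, a disparity that a single pooled sensitivity figure would hide.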

Transparency and Validation of Data Sources

Transparency is another pressing issue. Many AI models function as "black boxes", making decisions based on complex data correlations that are difficult for users to interpret. This lack of clarity can make it harder for healthcare providers to understand or trust the AI’s outputs when making clinical decisions. According to the FDA, transparency means not only making information accessible but also ensuring it is understandable and usable for end users.

It’s also important to distinguish between "validation" and "model tuning." Per FDA regulations (21 CFR 820.3(z)), validation involves providing objective evidence that a device consistently fulfills its intended purpose. The FDA cautions against using "validation" to describe the training or tuning process in medical device submissions. This confusion can lead to inadequate evidence of a device’s safety and effectiveness. Proper validation requires selecting reliable reference standards and managing variability among clinicians involved in grading.
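Variability among clinicians can be quantified before their labels are used as a reference standard. The sketch below computes Cohen's kappa, a standard chance-corrected agreement statistic for two raters. It is offered as one possible measure, not one the guidance prescribes, and the ratings are synthetic.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same cases."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of cases")
    n = len(rater_a)
    # Fraction of cases on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Agreement expected by chance, given each rater's label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Synthetic gradings from two hypothetical clinicians (1 = finding present).
clinician_1 = [1, 1, 0, 0, 1, 0, 1, 0]
clinician_2 = [1, 1, 0, 0, 1, 0, 0, 1]
kappa = cohens_kappa(clinician_1, clinician_2)
```

Here the clinicians agree on six of eight cases (75%), but because half of that agreement would be expected by chance, kappa is only 0.5.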

Performance Metric Validation Difficulties

Validating performance metrics presents its own set of challenges. AI models are particularly vulnerable to data drift – subtle changes in input data over time that may go unnoticed. Factors like updated imaging protocols, new electronic health record systems, or changes in clinical workflows can alter input data, potentially degrading performance without users realizing it.
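Drift in a single numeric input can be checked by comparing its recent distribution against a baseline captured at validation time. The sketch below uses the Population Stability Index (PSI), one common statistic for this purpose. The choice of PSI, the bin count, and the function name are assumptions for illustration, and any alert threshold would need to be set and justified by the manufacturer.

```python
import math


def population_stability_index(baseline, current, n_bins=10, eps=1e-6):
    """PSI between a baseline sample and a current sample of one numeric input.

    Bins are equal-width over the baseline range; values outside that range
    are counted in the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("Baseline has no spread; PSI is undefined")
    width = (hi - lo) / n_bins

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Synthetic example: the "current" inputs are shifted upward by 50 units.
baseline_inputs = [float(v) for v in range(100)]
shifted_inputs = [v + 50.0 for v in baseline_inputs]
psi_same = population_stability_index(baseline_inputs, baseline_inputs)
psi_shifted = population_stability_index(baseline_inputs, shifted_inputs)
```

Identical distributions give a PSI of zero, and the score grows as the distributions diverge. A check like this covers inputs only, so it complements rather than replaces monitoring of the model's actual performance.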

Another issue is the gap between controlled testing environments and real-world clinical settings. Benchmarks established during testing may not hold up when patient demographics shift, clinical practices evolve, or healthcare infrastructures change. Post-deployment, maintaining data integrity becomes even more complex due to challenges like incomplete data, inconsistent quality, and interoperability issues across different electronic health record systems and device logs.

| Challenge Category | What It Is | Impact on Quality/Safety |
|---|---|---|
| AI Bias | Systematic errors based on demographics or settings | May lead to incorrect diagnosis or treatment for specific subgroups |
| Data Drift | Changes in input data over time | Causes undetected performance degradation |
| Model Opacity | Lack of clear rationale in AI correlations | Reduces user trust and complicates failure detection |
| Validation Gaps | Confusing model optimization with regulatory validation | Insufficient evidence of safety for intended use |

These challenges underscore why the FDA is adopting the Total Product Life Cycle (TPLC) approach, which focuses on ongoing oversight and continuous performance monitoring instead of relying solely on one-time validations. This shift also sets the stage for strategies like Predetermined Change Control Plans (PCCPs) and Good Machine Learning Practices (GMLP), which will be discussed in the next sections.

How to Address Data Integrity Challenges

The FDA guidance highlights the importance of maintaining strong data integrity in AI systems. To help manage risks throughout the lifecycle of AI-enabled devices, the FDA suggests two key strategies: Predetermined Change Control Plans (PCCPs) and Good Machine Learning Practices (GMLP).

Predetermined Change Control Plans (PCCPs)

PCCPs provide a structured approach to handle updates to AI-powered device software without requiring a new marketing application for every change. According to Troy Tazbaz, PCCPs must be focused, risk-based, and evidence-based, ensuring transparency and incorporating total product lifecycle management.

A complete PCCP includes three essential components:

- Modification Description: Explains what changes will be made, whether manual or automatic, and how frequently they will occur.

- Modification Protocol: Details how data will be sourced and ensures test data remains strictly independent, accurately representing the intended population.

- Impact Assessment: Compares the original and updated versions of the device to assess risks, such as unintended bias.

PCCPs are applicable to submissions like 510(k) (Traditional and Abbreviated), De Novo, and PMA, though they are currently excluded from the Special 510(k) pathway. Manufacturers can leverage the FDA’s Q-Submission Program to get early feedback on their proposed changes, potentially saving time and resources.

To ensure reliable performance evaluations, the test data used must remain independent and unbiased. Additionally, the Modification Protocol should include guardrails – mechanisms designed to detect, halt, or reverse any changes that fail to meet predefined performance standards.
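A guardrail of this kind can be sketched as a gate that a candidate model must pass before it replaces the current version. Everything below is a hypothetical illustration: the metric names, the thresholds, and the decision labels are assumptions, and a real Modification Protocol would define its own acceptance criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical predefined performance limits from a Modification Protocol."""
    min_sensitivity: float
    min_specificity: float
    max_subgroup_gap: float


def evaluate_update(current_metrics, candidate_metrics, criteria):
    """Decide whether a candidate model may replace the current one.

    Returns ("deploy", []) when every check passes, otherwise ("reject", reasons),
    in which case the current version stays in place.
    """
    reasons = []
    if candidate_metrics["sensitivity"] < criteria.min_sensitivity:
        reasons.append("sensitivity below predefined minimum")
    if candidate_metrics["specificity"] < criteria.min_specificity:
        reasons.append("specificity below predefined minimum")
    if candidate_metrics["subgroup_gap"] > criteria.max_subgroup_gap:
        reasons.append("subgroup performance gap above predefined maximum")
    if candidate_metrics["sensitivity"] < current_metrics["sensitivity"]:
        reasons.append("sensitivity regressed relative to current version")
    return ("deploy", []) if not reasons else ("reject", reasons)


# Made-up metrics, assumed to come from an independent test set.
criteria = AcceptanceCriteria(min_sensitivity=0.90, min_specificity=0.85, max_subgroup_gap=0.05)
current = {"sensitivity": 0.92, "specificity": 0.88, "subgroup_gap": 0.03}
candidate = {"sensitivity": 0.91, "specificity": 0.80, "subgroup_gap": 0.08}
decision, reasons = evaluate_update(current, candidate, criteria)
```

Returning the list of failed checks alongside the decision keeps a record of why an update was halted, which is useful evidence for the quality system.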

Good Machine Learning Practices (GMLP)

The FDA, together with Health Canada and the UK’s MHRA, has outlined 10 guiding principles for GMLP, and the International Medical Device Regulators Forum (IMDRF) has published its own GMLP guiding principles. These principles serve as a roadmap for creating safe and reliable AI systems, emphasizing the need for diverse clinical and technical expertise to uphold data integrity.

Data quality assurance is a cornerstone of GMLP. It calls for robust software engineering and security measures. As the guidance explains, data collection protocols must ensure adequate representation of factors like age, sex, race, and ethnicity to allow results to be generalized to the intended population. This helps mitigate bias, a common challenge in AI systems.

GMLP also stresses the importance of using independent test sets to validate performance, echoing the recommendations for PCCPs. For AI systems that continue learning post-deployment, the guidance highlights the need for controls to address risks like overfitting, bias, or model degradation caused by dataset drift. These practices align with the FDA’s Total Product Life Cycle model, which emphasizes ongoing oversight and performance consistency.
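One practical threat to test-set independence is the same patient contributing samples to both the training and test data. The sketch below splits records at the patient level using a hash of the patient ID, then checks for overlap. The function names and ID format are hypothetical, and patient-level separation is only one aspect of independence (separating data by collection site is another).

```python
import hashlib


def assign_split(patient_id, test_fraction=0.2):
    """Deterministically assign a patient to 'train' or 'test' from a hash of the ID."""
    digest = hashlib.sha256(patient_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    return "test" if bucket < test_fraction else "train"


def split_records(records, test_fraction=0.2):
    """Split (patient_id, sample) records so that no patient spans both sets."""
    train, test = [], []
    for patient_id, sample in records:
        target = test if assign_split(patient_id, test_fraction) == "test" else train
        target.append((patient_id, sample))
    return train, test


def overlapping_patients(train, test):
    """Return the sorted patient IDs that appear in both sets (should be empty)."""
    return sorted({pid for pid, _ in train} & {pid for pid, _ in test})


# Synthetic records: 50 hypothetical patients with three samples each.
records = [(f"patient-{i:03d}", f"sample-{j}") for i in range(50) for j in range(3)]
train_set, test_set = split_records(records)
leaked = overlapping_patients(train_set, test_set)
```

Because the assignment depends only on the patient ID, a patient stays on the same side of the split when new samples arrive later, which matters when models are retrained on data that keeps growing.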

Postmarket monitoring plays a critical role as well. After deployment, AI models must be continuously observed for real-world performance. Changes in clinical workflows, imaging equipment, or electronic health record systems can lead to dataset drift, silently impacting AI effectiveness over time.
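Continuous observation can be as simple as tracking how often model outputs match outcomes that are later confirmed clinically. The sketch below keeps a sliding window of recent results and flags when accuracy falls below a threshold. The class name, window size, and threshold are illustrative assumptions, not values taken from the guidance.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track agreement between model outputs and confirmed outcomes over a sliding window."""

    def __init__(self, window_size, alert_threshold):
        self.outcomes = deque(maxlen=window_size)  # oldest results drop off automatically
        self.alert_threshold = alert_threshold

    def record(self, prediction, confirmed_label):
        """Store whether one prediction matched its confirmed outcome."""
        self.outcomes.append(prediction == confirmed_label)

    def accuracy(self):
        """Accuracy over the current window, or None if nothing has been recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True only once the window is full and accuracy is below the threshold."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.alert_threshold


# Synthetic example: four correct results followed by two incorrect ones.
monitor = RollingAccuracyMonitor(window_size=4, alert_threshold=0.75)
for prediction, confirmed in [(1, 1), (0, 0), (1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(prediction, confirmed)
```

Waiting until the window is full before raising an alert avoids reacting to the first few cases, at the cost of a short delay before degradation is noticed.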

The FDA underscores that adherence to GMLP principles "can help to support the quality of such devices". By integrating these practices from the start, manufacturers can ensure data integrity is a foundational element of their AI systems. Together, PCCPs and GMLP create a robust framework for developing safe and effective AI-enabled medical devices.

Data Integrity and Quality System Regulations (QSR)

Data integrity plays a key role in the FDA’s Quality System Regulation (21 CFR Part 820). For AI-enabled medical devices, it provides the evidence needed to show that a device consistently meets its intended purpose throughout its lifecycle.

The FDA’s QSR requires manufacturers to validate device design under Design Controls (21 CFR 820.30), which for AI-enabled devices depends on strong data management practices and independent testing. Problems like performance degradation or data drift can be handled through the Nonconforming Product (21 CFR 820.90) and CAPA (21 CFR 820.100) provisions.

A solid Data Management Plan is critical for meeting QSR requirements throughout the Total Product Life Cycle (TPLC). This plan must cover data sources, curation, annotation, storage, and security. According to the FDA (21 CFR 820.3(z)), "validation" means providing objective evidence that a device consistently performs as intended – this is distinct from fine-tuning AI models.

Manufacturers are also expected to follow ISO 14971 and AAMI CR34971 standards to identify AI-specific risks like data poisoning, model evasion, and performance drift. Cybersecurity measures are vital to safeguard training data and inputs from tampering or unauthorized access. Additionally, performance monitoring plans required under QSR help manage risks associated with data drift in real-world use. These regulations form the basis for crafting data integrity strategies tailored to AIH LLC’s products.
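Tampering with stored training data can be made detectable by recording a cryptographic digest of each file when the dataset is frozen and re-checking it later. The sketch below uses SHA-256 over in-memory bytes to stay self-contained. The function names and file names are hypothetical, and the approach only works if the manifest itself is stored where it cannot be altered.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(files):
    """Map each file name to the digest of its contents at freeze time."""
    return {name: sha256_of(content) for name, content in files.items()}


def verify_manifest(files, manifest):
    """Return (name, problem) pairs for files that are missing, modified, or unexpected."""
    problems = []
    for name, expected in manifest.items():
        if name not in files:
            problems.append((name, "missing"))
        elif sha256_of(files[name]) != expected:
            problems.append((name, "modified"))
    for name in files:
        if name not in manifest:
            problems.append((name, "unexpected"))
    return sorted(problems)


# Synthetic dataset contents standing in for real files.
frozen = {"scans.csv": b"id,value\n1,0.5\n", "labels.csv": b"id,label\n1,1\n"}
manifest = build_manifest(frozen)
later = {"scans.csv": b"id,value\n1,0.9\n", "extra.csv": b"id\n2\n"}
problems = verify_manifest(later, manifest)
```

In the example, the later snapshot has one altered file, one missing file, and one file that was never part of the frozen dataset, and all three are reported.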

Data Integrity Strategies for AIH LLC Products

For AIH LLC’s aiSpine posture monitoring device and aiRing vital signs monitoring ring, maintaining data integrity under QSR is essential. Both devices rely on continuous sensor data collection, so their Data Management Plans must outline how data is collected, validated, and stored to meet FDA standards.

For aiSpine, protocols must ensure accurate posture measurements across a wide range of body types, ages, and usage scenarios. Training datasets should reflect the U.S. population, accounting for factors like age, sex, race, and ethnicity to minimize algorithmic bias in posture correction.

With aiRing’s vital signs monitoring, data integrity strategies must address its use in diverse scenarios and its waterproof design. Validation should confirm that the AI algorithms accurately track metrics like heart rate and oxygen saturation under different conditions and activities. Independent test datasets – separate from the training data – should verify these performance metrics.
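Accuracy against a reference device is often summarized with mean absolute error and Bland-Altman statistics (mean bias and 95% limits of agreement). The sketch below shows the arithmetic on synthetic heart-rate pairs. It is a generic illustration, not AIH LLC's validation method, and the readings are made up.

```python
from statistics import mean, stdev


def agreement_stats(device_values, reference_values):
    """Mean absolute error plus Bland-Altman bias and 95% limits of agreement."""
    if len(device_values) != len(reference_values) or len(device_values) < 2:
        raise ValueError("Need at least two paired measurements")
    # Signed difference for each pair: device reading minus reference reading.
    diffs = [d - r for d, r in zip(device_values, reference_values)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)  # uses the sample standard deviation
    return {
        "mae": mean([abs(x) for x in diffs]),
        "bias": bias,
        "loa_lower": bias - spread,
        "loa_upper": bias + spread,
    }


# Synthetic paired heart-rate readings in beats per minute.
device_bpm = [72, 75, 80, 68]
reference_bpm = [70, 76, 79, 70]
stats = agreement_stats(device_bpm, reference_bpm)
```

Running the same calculation separately for each condition or activity (at rest, during exercise, after water exposure) shows whether agreement holds across the scenarios the device is meant to cover.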

Both devices should also have performance monitoring plans to identify and address data drift after deployment. Factors like how users wear the devices, firmware updates, or changes in Bluetooth connectivity could impact data quality. Setting up safeguards to detect and resolve such issues is crucial for ongoing QSR compliance. Additionally, AIH LLC can use the FDA’s Q-Submission Program to get early feedback on validation methods, especially for new features like the aiNeuro device’s preemptive stroke monitoring capabilities.

Conclusion: Meeting Data Integrity Requirements for AI Medical Devices

Data integrity is at the heart of patient safety when it comes to AI-powered medical devices. As the number of authorized AI devices grows, manufacturers are under mounting pressure to adopt strong data management practices. These practices must ensure accuracy, reliability, and fairness throughout the entire lifecycle of a device.

The FDA has laid out a clear framework to help manufacturers maintain data integrity. One key component is Predetermined Change Control Plans (PCCPs), which allow pre-approved updates to devices while upholding safety standards. The FDA has since expanded the PCCP framework to cover all software functions in AI-enabled devices, enabling continuous improvements without compromising oversight.

"The recommendations in this guidance are intended to support iterative improvement through modifications to AI-enabled devices while continuing to provide a reasonable assurance of device safety and effectiveness." – FDA

Additionally, Good Machine Learning Practices (GMLP) emphasize ongoing monitoring and proactive risk management. GMLP Principle 10 specifically addresses deployed models: they should be monitored for real-world performance, and the risks of retraining should be managed. These measures create a strong foundation for reliable AI device performance.

For AIH LLC’s aiSpine and aiRing devices, these principles ensure transparent data handling, rigorous performance validation, and early identification of potential issues. By prioritizing data integrity, manufacturers not only protect patient outcomes but also align with regulatory expectations. Together, these practices and guidelines foster safer, more effective AI-enabled medical devices.

FAQs

How does the TPLC approach change FDA oversight for AI devices?

The Total Product Life Cycle (TPLC) approach extends FDA oversight of AI devices beyond a one-time premarket review to every stage of the device’s lifecycle. It covers premarket validation, which shows that the device meets safety and performance expectations before marketing authorization, and ongoing postmarket monitoring of real-world performance. It also supports planned updates, so that issues such as data drift, safety risks, or bias can be addressed while the device remains reliable and effective over time.

What should a PCCP include to allow AI updates without a new submission?

A Predetermined Change Control Plan (PCCP) should include three components: a Modification Description that specifies the planned changes, a Modification Protocol that details how each change will be developed, tested on independent data, and implemented, and an Impact Assessment that compares the original and updated versions for risks such as unintended bias. Changes made within the scope of the authorized plan can then move forward without a fresh marketing submission.

How can manufacturers detect and manage data drift after deployment?

Manufacturers can detect data drift by continuously monitoring deployed models: tracking input data for shifts caused by changes such as new imaging protocols or electronic health record systems, and regularly comparing real-world performance, including across demographic subgroups, against premarket benchmarks. Detected drift can then be managed through predefined safeguards and, where needed, model updates under a PCCP or corrective action under the quality system. These steps align with FDA recommendations on lifecycle management and postmarket surveillance for AI-enabled medical devices.