Wearable devices are no longer just fitness trackers – they now use AI to customize their interfaces based on how you interact with them. AI processes data from sensors like ECG, PPG, and accelerometers to make instant changes that suit your needs. These adjustments include resizing buttons, reorganizing menus, and timing notifications better, all without requiring you to tweak settings manually. By combining real-time data processing with models like Deep Q-Learning, wearables have become smarter, faster, and more user-friendly.

Key takeaways:

- AI uses sensors to monitor your health and behavior, like heart rate or movement.

- Interfaces adjust automatically for better usability (e.g., larger buttons for motor challenges).

- Real-time processing ensures quick responses, even during activities like running.

- Devices like aiSpine and aiRing focus on health improvement, such as posture correction and tracking vital signs.

This technology is reshaping how wearables support health management, offering faster responses and more accurate insights tailored to individual needs.



How AI Detects and Interprets User Behavior and Health Data

Modern wearables are built around a network of sensors that monitor everything from your heart’s electrical activity to the composition of your sweat. Together, these sensors create a real-time picture of your health and daily activities. This constant flow of data is what powers the advanced AI systems described below.

Sensor Integration in Wearable Devices

Wearable devices are equipped with a variety of sensors that work together to track both physical and physiological metrics. For example:

- Physiological sensors like ECG electrodes detect your heart’s electrical signals, while PPG sensors use light to measure blood oxygen levels and blood volume changes.

- Inertial Measurement Units (IMUs) – which include accelerometers, gyroscopes, and magnetometers – track movement, posture, and gait.

- Biochemical sensors analyze sweat to measure glucose, lactate, cortisol, and electrolyte levels, all without invasive methods.

These sensors generate an enormous amount of time-stamped data, capturing everything from your internal health to external behaviors throughout the day.

Real-Time Data Processing with AI

Once the sensors collect raw data, it is cleaned and passed to AI models such as CNNs, LSTMs, or Transformers. Reported results are strong: transformer models have reached 96.1% classification accuracy with just 30 milliseconds of latency. Edge AI, which processes data directly on the device rather than in the cloud, is reported to improve response times by 85% compared to traditional cloud-based systems. This speed can be life-saving in time-sensitive situations, such as detecting arrhythmias or falls.

Combining Multiple Sensor Inputs for Better Accuracy

By merging data from multiple sensors, AI can deliver more reliable health insights. For instance, combining PPG sensor data with accelerometer readings helps distinguish whether an elevated heart rate is due to exercise, stress, or a medical issue. Similarly, pairing ECG with phonocardiogram (PCG) signals improves glucose monitoring, while integrating PPG, accelerometer, and sound sensors enhances sleep quality assessments.

This multimodal approach has significantly improved the accuracy of wearable devices. AI-powered systems using combined sensor data have reduced false alarms by 35% compared to single-sensor monitoring. The result? Devices that not only monitor what your body is doing but also understand the reasons behind those changes. This deeper insight ensures that the device’s responses align with your actual health and activity.
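The PPG-plus-accelerometer case can be sketched as a simple rule, shown here only to make the idea concrete. The thresholds, field names, and labels are hypothetical; a real device would learn this mapping from data rather than hard-code it.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    heart_rate_bpm: float     # estimated from the PPG signal
    accel_magnitude_g: float  # motion intensity from the accelerometer, gravity removed


# Hypothetical thresholds, chosen only for illustration.
ELEVATED_HR_BPM = 100.0
ACTIVE_MOTION_G = 0.3


def interpret(reading: Reading) -> str:
    """Use motion context to explain an elevated heart rate."""
    if reading.heart_rate_bpm < ELEVATED_HR_BPM:
        return "normal"
    if reading.accel_magnitude_g >= ACTIVE_MOTION_G:
        return "exercise"          # high heart rate while moving is expected
    return "elevated_at_rest"      # high heart rate without motion deserves a closer look
```

With a single sensor, the last two cases would look identical, which is where many false alarms come from.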

How AI Adjusts Wearable Interfaces in Real Time

AI takes wearable technology to the next level by using real-time sensor data to make instant adjustments to your device’s interface. These changes happen automatically, ensuring that the device becomes more user-friendly exactly when you need it.

Interface Elements That AI Can Adjust

AI fine-tunes various interface elements based on your behavior and environment. For instance, text size and screen contrast adapt to improve readability in different lighting conditions or to assist users with visual impairments. Button sizes and placement shift according to Fitts’ Law, making controls easier to use for individuals with motor difficulties. Even notifications are optimized – if your calendar suggests you’re in a meeting, the device can switch to silent mode without you lifting a finger. Similarly, menu hierarchies reorganize themselves based on your most-used features, cutting down the time it takes to access common functions.
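To see why enlarging a button helps, Fitts' Law can be written out directly. The sketch below uses the common Shannon formulation, MT = a + b · log2(D / W + 1). The constants `a` and `b` are placeholders; in practice they are fitted per user and per device.

```python
import math


def index_of_difficulty(distance: float, width: float) -> float:
    """Shannon formulation of Fitts' index of difficulty, in bits."""
    return math.log2(distance / width + 1)


def movement_time(distance: float, width: float, a: float = 0.1, b: float = 0.15) -> float:
    """Predicted time in seconds to hit a target; a and b are placeholder constants."""
    return a + b * index_of_difficulty(distance, width)


# Same travel distance (30 mm), two button widths.
small = movement_time(distance=30, width=6)
large = movement_time(distance=30, width=10)
print(f"6 mm button: {small:.3f} s, 10 mm button: {large:.3f} s")
```

A wider target lowers the index of difficulty, so the predicted movement time drops. This is the relationship an adaptive interface relies on when it enlarges controls for users with motor difficulties.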

A fascinating example comes from UC San Diego, where researchers developed a deep-learning soft patch to filter motion noise in real time. This breakthrough enables gesture controls to work reliably, even in chaotic environments:

"By integrating AI to clean noisy sensor data in real time, the technology enables everyday gestures to reliably control machines even in highly dynamic environments." – Xiangjun Chen, Postdoctoral Researcher, UC San Diego

Another study, published in Scientific Reports (a Nature Portfolio journal), evaluated an adaptive UI model that used Deep Q-Learning (DQL) enhanced by Golden Jackal Optimization (GJO). The system achieved a task completion time of 82 seconds, an error rate of just 9.9%, and a user satisfaction score of 78%. Notably, the model converged in only 45 training epochs, compared with 70 epochs for standard models.

| Interface Element | AI Adjustment Mechanism | User Benefit |
|---|---|---|
| Text/Contrast | Auto-scaling for visual needs | Easier reading, especially for older users |
| Menu Hierarchy | Analysis of usage frequency | Faster access to frequently used features |
| Notifications | Predictive timing models | Alerts arrive at non-disruptive moments |
| Button Size | Spatial adjustments based on Fitts’ Law | Fewer errors for users with motor challenges |
| Screen Content | Context-aware prioritization | Tailored focus on health or productivity data |

These real-time adjustments ensure wearables not only meet your immediate needs but also evolve with your usage patterns.
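The menu-hierarchy row is the easiest of these to illustrate. A minimal sketch, assuming the device keeps a log of which feature was opened each time (the menu names and log are made up):

```python
from collections import Counter


def reorder_menu(menu_items: list[str], usage_log: list[str]) -> list[str]:
    """Put the most-used items first; unused items keep their original order."""
    counts = Counter(usage_log)
    # sorted() is stable, so items with equal counts stay in their existing order.
    return sorted(menu_items, key=lambda item: -counts[item])


menu = ["Settings", "Heart rate", "Sleep", "Timer"]
log = ["Sleep", "Heart rate", "Heart rate", "Timer", "Heart rate", "Sleep"]
print(reorder_menu(menu, log))
```

A production system would likely weight recent usage more heavily and limit how often the order changes, so the menu does not shift under the user's finger.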

How Wearables Learn and Adapt Over Time

Wearable devices don’t just react – they learn. AI-driven systems like Deep Q-Learning refine their adjustments over time by analyzing your interaction data. For instance, if you frequently dismiss morning workout reminders but respond to them later in the day, the system will shift the timing to better suit your routine.

This learning process is accelerated by transfer learning, which uses insights from broader user data to personalize your experience faster, sometimes within just 24 hours of use. Large-scale models capture general trends while smaller, on-device models capture individual nuances, and the two feed each other in a loop. The result is a "digital twin" of your health: a model that simulates various scenarios to provide personalized recommendations, improving both your health management and your overall experience.
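The workout-reminder example can be sketched with a heavily simplified form of Q-learning. This is not Deep Q-Learning: it uses a single state and a lookup table instead of a neural network, and the slot names, learning rate, and simulated user are all assumptions made for illustration.

```python
import random

SLOTS = ["morning", "afternoon", "evening"]  # hypothetical reminder slots


def learn_reminder_slot(respond, steps=500, alpha=0.1, epsilon=0.2, seed=0):
    """Learn which slot the user responds to, using epsilon-greedy Q-learning.

    respond(slot) returns True if the user acted on a reminder sent in that slot.
    """
    rng = random.Random(seed)
    q = {slot: 0.0 for slot in SLOTS}
    for _ in range(steps):
        if rng.random() < epsilon:
            slot = rng.choice(SLOTS)      # explore: try a random slot
        else:
            slot = max(SLOTS, key=q.get)  # exploit: use the best slot so far
        reward = 1.0 if respond(slot) else 0.0
        # One-state update: there is no next state, so the target is just the reward.
        q[slot] += alpha * (reward - q[slot])
    return max(SLOTS, key=q.get), q


# Simulated user who ignores reminders except in the evening.
best, q_values = learn_reminder_slot(lambda slot: slot == "evening")
print(best, q_values)
```

A deep variant replaces the table with a network that takes context (time, activity, recent dismissals) as input, so the learned timing can differ between, say, workdays and weekends.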

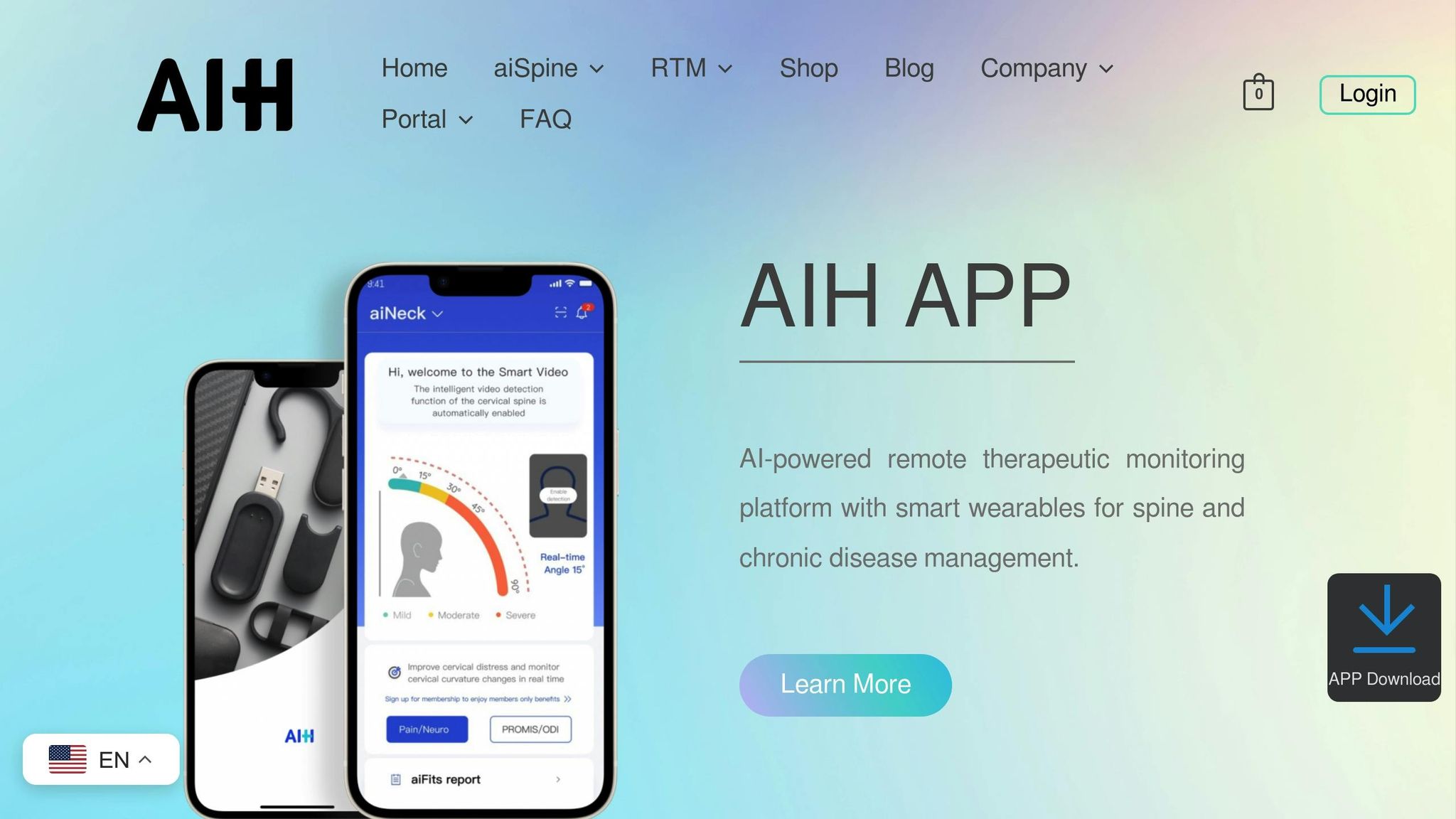

AIH LLC Devices: aiSpine and aiRing

AIH LLC has put these principles into practice with its aiSpine and aiRing devices. The aiSpine focuses on posture correction, using real-time tracking to detect slouching and delivering gentle vibration reminders to encourage proper alignment. The aiRing, by contrast, monitors vital signs like heart rate and blood oxygen levels, dynamically adjusting its interface based on your activity. For example, during workouts, it emphasizes heart rate data, while during rest, it shifts to sleep metrics.

Both devices sync with the AIH Health App, which acts as a centralized hub for real-time tracking and feedback. The app prioritizes the most relevant information on the main screen and organizes historical data for easy access. Additional features like multiple wearing modes, a 7-day standby battery for aiSpine, waterproofing for aiRing, and seamless Bluetooth connectivity ensure that these devices adapt to your health needs throughout the day.

Maintaining Accuracy in Real-World Conditions

Wearables rely on advanced AI techniques to stay precise, even when faced with the unpredictability of daily life. From the jostling of a morning jog to the vibrations of a moving car, these devices are designed to provide accurate sensor readings no matter the situation. This real-time adaptability allows AI to adjust interfaces seamlessly, ensuring reliable performance during all kinds of activities.

Noise Reduction and Signal Filtering

AI systems excel at separating meaningful health signals from the "noise" of real-world motion. By training on diverse datasets, these systems learn to identify consistent gestures or health patterns amidst the chaos rather than trying to eliminate all background interference.

One standout method is Adaptive Bayesian Filtering, which dynamically learns how to differentiate signals from noise. This approach has been shown to reduce the Root Mean Square Error from 2.84 to 1.21 bpm and the mean absolute relative error from 3.46% to 1.36%. By using windowed analysis, the system adapts to fluctuating signal-to-noise ratios, offering smoother results compared to static methods.
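For readers unfamiliar with the two error measures quoted above, here is how they are usually computed. The heart-rate values in the usage lines are made up and are not taken from the cited study.

```python
import math


def rmse(estimates: list[float], references: list[float]) -> float:
    """Root Mean Square Error, in the same unit as the inputs (e.g. bpm)."""
    squared = [(e - r) ** 2 for e, r in zip(estimates, references)]
    return math.sqrt(sum(squared) / len(squared))


def mean_absolute_relative_error(estimates: list[float], references: list[float]) -> float:
    """Average of |estimate - reference| / reference, as a percentage."""
    ratios = [abs(e - r) / r for e, r in zip(estimates, references)]
    return 100.0 * sum(ratios) / len(ratios)


wearable_bpm = [62.0, 58.0]   # hypothetical wearable estimates
reference_bpm = [60.0, 60.0]  # hypothetical reference readings
print(rmse(wearable_bpm, reference_bpm))
print(mean_absolute_relative_error(wearable_bpm, reference_bpm))
```

RMSE penalizes large individual errors more heavily, while the relative error shows how big the typical miss is compared with the true value.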

"Robustness in wearable sensing now arises not from isolating devices from their surroundings but from training systems to interpret them." – Chi Hwan Lee, Professor and Researcher, Purdue University

AI also employs contextual anomaly detection to distinguish between normal physiological changes and actual health concerns. For example, if your heart rate spikes during a workout, the system cross-references activity data to recognize this as expected rather than flagging it as an issue. These filtering techniques ensure that wearables maintain their accuracy, even in dynamic environments.

Maintaining Accuracy During Movement

Beyond filtering out noise, AI tackles the complexities of movement head-on. Activities like walking or running pose significant challenges, but specialized techniques ensure wearables remain accurate. For instance, sliding-window mechanisms use overlapping timeframes – 1-second windows with 0.25-second steps – to capture transitional movements with precision. This method allows devices to adapt seamlessly to user motion.
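The windowing scheme described above is straightforward to sketch. The 1-second window and 0.25-second step come from the text; the 100 Hz sample rate in the usage lines is an assumption.

```python
def sliding_windows(samples, sample_rate_hz, window_s=1.0, step_s=0.25):
    """Yield overlapping windows of sensor samples."""
    window = int(round(window_s * sample_rate_hz))
    step = int(round(step_s * sample_rate_hz))
    if window <= 0 or step <= 0:
        raise ValueError("window and step must cover at least one sample")
    # Stop once a full window no longer fits in the remaining samples.
    for start in range(0, len(samples) - window + 1, step):
        yield samples[start:start + window]


# Two seconds of fake accelerometer samples at an assumed 100 Hz.
samples = list(range(200))
windows = list(sliding_windows(samples, sample_rate_hz=100))
print(len(windows), windows[1][0], windows[-1][-1])
```

Because each window shares 75% of its samples with the previous one, a gesture that straddles a window boundary still appears whole in a neighboring window.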

In a study conducted in November 2025, researchers at UC San Diego achieved over 93% gesture recognition accuracy using sliding-window techniques combined with transfer learning. Transfer learning is particularly effective, boosting recognition accuracy for new users from around 51% to more than 92% with just two samples per gesture.

For wearables like the aiRing, which monitors vital signs, maintaining accuracy during movement is critical. AI-driven smart textiles, employing methods like Kalman filtering and adaptive thresholding, have reduced errors in physiological monitoring by up to 25%. These systems have achieved Pearson correlation coefficients as high as 0.96 for heart rate and 0.99 for hydration levels when compared to medical-grade devices. In other words, readings from a well-filtered wearable can closely track medical-grade measurements, even while you’re on the go.
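Kalman filtering is the easiest of these methods to show in a few lines. Below is a minimal one-dimensional sketch that treats heart rate as a slowly changing value. The noise variances are assumptions for illustration, not parameters from the cited work.

```python
def kalman_smooth(measurements, process_var=0.01, measurement_var=4.0):
    """Smooth a noisy 1-D signal with a scalar Kalman filter."""
    if not measurements:
        return []
    estimate = measurements[0]  # start from the first reading
    error_var = 1.0             # initial uncertainty of the estimate
    smoothed = [estimate]
    for z in measurements[1:]:
        # Predict: the value is assumed constant, but uncertainty grows a little.
        error_var += process_var
        # Update: blend prediction and measurement according to their uncertainty.
        gain = error_var / (error_var + measurement_var)
        estimate += gain * (z - estimate)
        error_var *= 1.0 - gain
        smoothed.append(estimate)
    return smoothed


# Hypothetical heart-rate readings with one motion artifact (110 bpm).
readings = [70.0, 71.0, 69.0, 110.0, 70.0, 71.0]
print([round(v, 1) for v in kalman_smooth(readings)])
```

The filter pulls the 110 bpm spike back toward the running estimate instead of passing it through. An adaptive variant would raise `measurement_var` when the accelerometer reports heavy motion.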

Benefits of Adaptive Interfaces for Users

AI-powered adaptive interfaces elevate wearables from simple tracking tools to intelligent health companions. By responding to individual needs and behaviors in real time, these systems offer practical advantages for diverse users – whether managing chronic conditions or navigating physical and cognitive challenges.

Improved Accessibility for Users with Disabilities

Adaptive interfaces are breaking down barriers for the billions of people who rely on assistive products, a number expected to grow to 3.5 billion by 2050. Unlike traditional systems that rely on static settings, AI models like Deep Q-Learning dynamically adjust elements such as button placement, navigation flow, and notification timing to suit user needs. For individuals with motor impairments, these systems apply Fitts’ Law to resize and reposition interface elements, making devices easier to use.

Advancements in visual and sensory support have also been transformative. For example, in December 2024, researchers at the University of Michigan introduced WorldScribe, a generative AI tool that uses camera input to provide live audio descriptions. During testing, it helped a blind user locate a cat by describing, "A pale cream-colored cat… sits atop a white desk." Similarly, tools like SoundWatch translate environmental sounds, such as doorbells, sirens, or microwave beeps, into haptic feedback, offering a new level of independence for the deaf and hard-of-hearing community.

"People with disabilities experience the world very differently… Unfortunately, this is not reflected in mainstream design principles. Most new technologies and user interfaces are made for the average, non-disabled person." – Dhruv Jain, Assistant Professor of Computer Science and Engineering, University of Michigan

These accessibility innovations not only improve usability but also pave the way for personalized experiences, discussed in the next section.

Personalization Without Complexity

Adaptive interfaces simplify and enhance personalization. Traditional systems often require users to sift through endless settings, but AI-powered systems learn and adjust automatically. Techniques like few-shot learning can understand preferences with as few as 3 to 10 data samples.
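One simple way to learn from a handful of samples is a nearest-centroid classifier: average the few examples of each class, then assign new inputs to the closest average. This is a sketch of the general idea, not the method used by any product named here, and the gesture labels and feature vectors are invented.

```python
import math


def build_centroids(examples: dict[str, list[list[float]]]) -> dict[str, list[float]]:
    """Average the feature vectors of each label into one prototype per label."""
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in examples.items()
    }


def classify(features: list[float], centroids: dict[str, list[float]]) -> str:
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


# Three hypothetical examples per gesture, each a 2-value feature vector.
examples = {
    "tap": [[0.1, 0.9], [0.2, 1.0], [0.0, 0.8]],
    "swipe": [[0.9, 0.1], [1.0, 0.2], [0.8, 0.0]],
}
centroids = build_centroids(examples)
print(classify([0.15, 0.85], centroids))
```

In practice the feature vectors would come from a model pre-trained on many users, which is what lets so few personal examples go a long way.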

One standout example is WatchGuardian, a smartwatch system introduced in February 2025. It allows users to define personalized interventions for specific habits. In a study involving 26 participants, the system achieved an impressive 87.7% accuracy in detecting custom actions using just ten examples, reducing undesirable behaviors by 64% – a 29% improvement over rule-based systems. To avoid overwhelming users with notifications, these systems use debouncer mechanisms to deliver alerts only during "Goldilocks Time Windows", ensuring feedback arrives when it’s most helpful.
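A debouncer in this sense can be as small as a minimum gap between alerts. The sketch below covers only that rate-limiting part. It does not reproduce WatchGuardian's implementation or its timing windows, and the 60-second gap is an arbitrary choice.

```python
class NotificationDebouncer:
    """Suppress alerts that arrive too soon after the previous one."""

    def __init__(self, min_gap_s: float):
        self.min_gap_s = min_gap_s
        self._last_sent_s = None  # time of the last alert that was delivered

    def should_send(self, now_s: float) -> bool:
        """Return True and record the time if enough time has passed."""
        if self._last_sent_s is None or now_s - self._last_sent_s >= self.min_gap_s:
            self._last_sent_s = now_s
            return True
        return False


debouncer = NotificationDebouncer(min_gap_s=60)
detections = [0, 10, 59, 60, 100, 130]  # seconds at which the habit was detected
print([debouncer.should_send(t) for t in detections])
```

Repeated detections of the same habit within a minute produce one alert rather than a burst of them.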

This seamless personalization ensures that wearables adapt to your needs without adding complexity to your daily routine.

Better Health Management Outcomes

By combining accessibility and personalization, adaptive interfaces contribute to improved health outcomes. For individuals managing chronic conditions like diabetes or heart disease, these systems analyze behavioral patterns in real time to provide timely interventions. During critical moments, such as a hypoglycemic episode, the interface can prioritize essential functions – like quick-access emergency contacts – while temporarily disabling less urgent features.

Devices like AIH LLC’s aiSpine and aiRing showcase how adaptive interfaces enhance health management. The aiSpine learns from movement patterns to offer precise posture correction and back pain relief, while the aiRing monitors vital signs such as heart rate and blood oxygen levels. The smart-textile research discussed earlier, which reported correlation coefficients of 0.96 for heart rate and 0.99 for hydration levels against medical-grade devices, shows how closely this kind of AI-assisted sensing can track clinical equipment.

AI-powered alert systems further refine health monitoring by reducing false alarms by 35%. This level of precision ensures that alerts signal genuinely critical health events, particularly in cardiac monitoring, where distinguishing between normal and concerning variations can save lives. Additionally, edge AI processing accelerates response times by 85% compared to cloud-based systems, ensuring crucial health information reaches users instantly.

"Designing for people with disabilities not only benefits them but also drives better technology for everyone." – Dhruv Jain, Assistant Professor of Computer Science and Engineering, University of Michigan

Measuring the Performance of Adaptive Wearable Interfaces

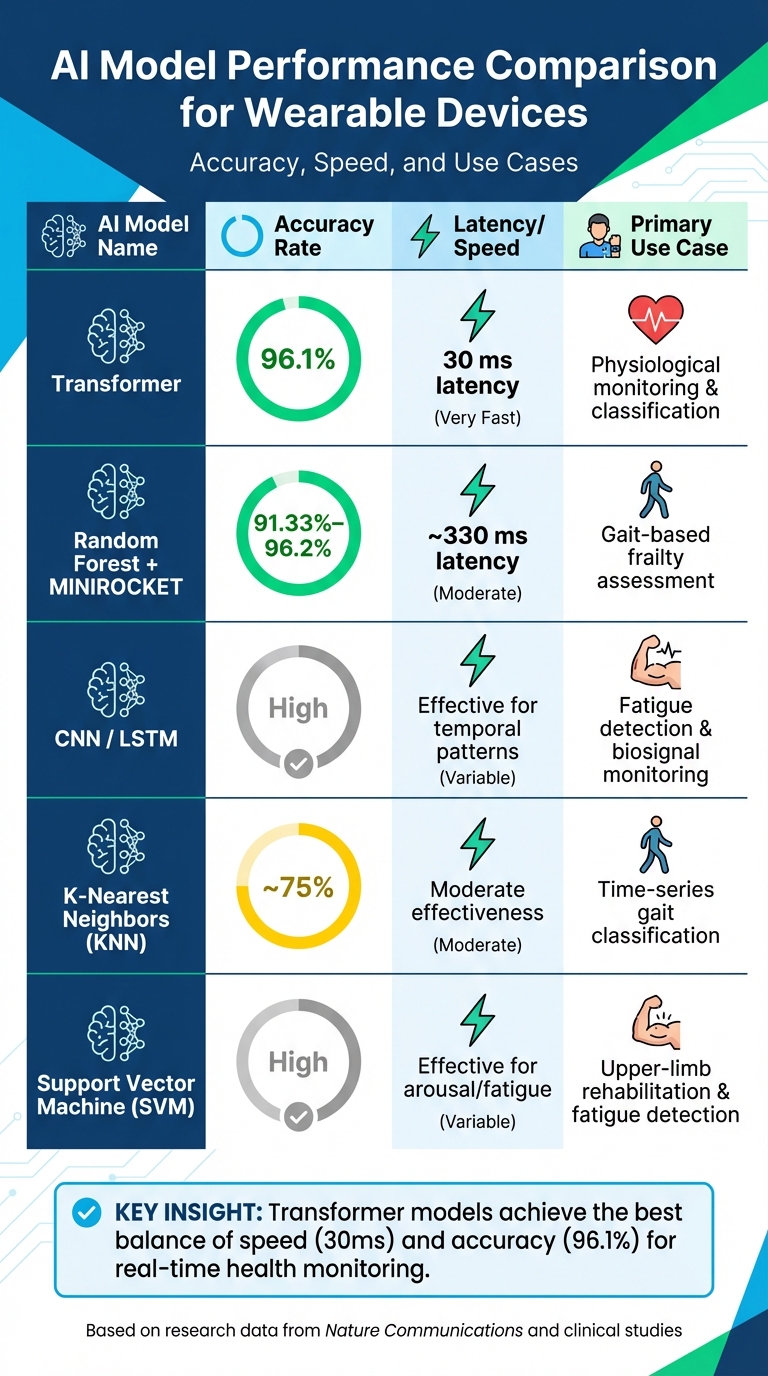

AI Model Performance Comparison for Wearable Devices

To make sure adaptive wearable interfaces provide real-time benefits, it’s crucial to measure their performance accurately. Metrics like detection accuracy, system latency, operational efficiency, and user satisfaction play a key role in ensuring timely and effective health interventions.

Key Performance Metrics

One of the most important factors is detection and classification accuracy – how well the system identifies health states, fatigue levels, or behavioral patterns. For example, transformer models have achieved an impressive 96.1% classification accuracy with a latency of just 30 milliseconds, making them excellent for real-time health monitoring. Similarly, on-device machine learning used for frailty assessment has shown an accuracy of over 90% when differentiating between healthy and pre-frail gait patterns.

System latency, which measures the time it takes for the interface to respond after collecting raw data, is another critical metric. Quick responsiveness is essential because delays can reduce the impact of just-in-time health interventions.

Operational efficiency focuses on how well the system manages resources. For instance, reinforcement learning–based adaptive sampling has been shown to cut power consumption by 50% while maintaining monitoring accuracy. On-device AI processing further reduces data transmission by nearly 99% compared to cloud-based systems, which not only extends battery life but also enhances data privacy.
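The transmission savings follow from simple arithmetic: if the device sends a short summary instead of every raw sample, the reduction is one minus the ratio of the two sizes. The sketch below uses an assumed 50 Hz sensor and a three-value summary, so its result illustrates the mechanism rather than reproducing the figure above.

```python
from statistics import mean


def summarize(window: list[float]) -> dict[str, float]:
    """Condense a window of raw samples into a few summary statistics."""
    return {"mean": mean(window), "min": min(window), "max": max(window)}


def transmission_reduction(raw_count: int, summary_count: int) -> float:
    """Fraction of values that no longer need to be transmitted."""
    return 1.0 - summary_count / raw_count


# One minute of samples at an assumed 50 Hz, sent as a 3-value summary.
raw_samples = 50 * 60
summary = summarize([60.0, 62.0, 64.0])
print(summary, f"{transmission_reduction(raw_samples, len(summary)):.1%}")
```

Less radio traffic is also where much of the battery and privacy benefit comes from, since raw physiological traces never leave the device.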

"Performing on-device ML-based data analysis significantly condenses these large datasets, transforming weeks of continuous analysis into easily digested trends that can be transmitted with minimal bandwidth." – Nature Communications

User experience metrics assess subjective aspects, such as workload (measured using tools like NASA-TLX), user satisfaction, and engagement. A real-world example is the NAMI architecture, deployed in October 2025 with 100 postgraduate students. By integrating EEG and physiological signals to assess cognitive load in real time, it significantly improved task performance and reduced perceived workload. Clinical outcomes, such as better rehabilitation results or fewer false alarms, also serve as strong indicators of the benefits of adaptive interfaces in health management.

Each of these metrics highlights how different AI models balance speed, accuracy, and energy efficiency, depending on the specific application.

Comparing Different AI Models

Various AI architectures approach these metrics differently, offering unique strengths for specific use cases. Transformer models excel in real-time physiological monitoring, combining high accuracy with minimal latency. Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks are particularly effective for analyzing temporal patterns, making them ideal for tasks like fatigue detection or continuous biosignal monitoring.

For gait-based frailty assessment, combining Random Forest models with MINIROCKET produced accuracy rates of 91.33%–96.2%, with a latency of approximately 330 milliseconds after optimization. This method also reduced energy consumption by 21% compared to off-device processing. On the other hand, K-Nearest Neighbors (KNN) models typically achieve around 75% accuracy, which makes them better suited to simpler classification tasks than to critical health applications.

| AI Model | Accuracy | Latency / Effectiveness | Primary Use Case |
|---|---|---|---|
| Transformer | 96.1% | 30 ms latency | Physiological monitoring & classification |
| Random Forest + MINIROCKET | 91.33%–96.2% | ~330 ms latency | Gait-based frailty assessment |
| CNN / LSTM | High | Effective for temporal patterns | Fatigue detection & biosignal monitoring |
| K-Nearest Neighbors (KNN) | ~75% | Moderate effectiveness | Time-series gait classification |
| Support Vector Machine (SVM) | High | Effective for arousal/fatigue | Upper-limb rehabilitation & fatigue detection |

Reinforcement Learning (RL) models add another layer of optimization by using adaptive strategies to improve system control. For example, RL-based adaptive sampling achieved a 50% reduction in power consumption while maintaining high monitoring accuracy. Ultimately, the choice of AI model depends on the specific needs of the application – whether the focus is on speed, precision, energy efficiency, or adapting to individual user patterns.

Conclusion

AI-powered interfaces are reshaping wearable technology, turning these devices into proactive health companions. By learning from user behavior, they can deliver personalized interventions that address potential health issues before they escalate. With cutting-edge models offering high accuracy and low latency, these wearables meet the dual demands of real-time responsiveness and clinical-grade precision.

This shift from static, rule-based monitoring to dynamic, self-learning systems represents a major step forward for managing chronic diseases and improving accessibility. For example, AI-driven wearables now reduce power consumption by up to 50% using adaptive sampling techniques. They also simplify complex health data into clear, actionable insights, making it easier for users to understand their health. For those living with chronic conditions or disabilities, these devices go even further, tailoring display elements, notifications, and interaction methods to fit individual needs and contexts.

"Wearable technology is shifting from simply displaying data to becoming an active partner in decision-making." – Avantika, Technology Enthusiast

AIH LLC’s aiSpine and aiRing serve as excellent examples of this evolution. These devices, paired with the AIH Health App, use adaptive AI to monitor posture and vital signs in real time. They continuously learn from users, creating a highly personalized experience that aligns with each person’s unique health patterns and daily routines.

With the market for AI-powered wearables expected to surpass $39 billion by 2026, the focus is moving beyond data collection. The real value lies in transforming this data into meaningful insights. Innovations like edge computing, federated learning for privacy, and large language models for conversational health coaching are driving this change. These advances make wearable technology more inclusive, offering personalized health management for everyone, regardless of technical skill or physical ability.

FAQs

How does a wearable decide what to change on the screen in real time?

Wearables use AI models such as Deep Q-Learning to interpret sensor data and interaction history, for example which features you open most or which reminders you dismiss. The model then picks the interface change most likely to help in the current context, such as a larger button, a reordered menu, or a delayed notification.

Does on-device (edge) AI improve privacy compared with cloud processing?

Yes. On-device (edge) AI handles data on the wearable itself, which sharply reduces how much sensitive health information must be sent to external servers (by nearly 99% in the research cited above). Less data in transit means a lower risk of data breaches or unauthorized access.

How do wearables stay accurate when I’m moving or exercising?

Wearables stay accurate during movement or exercise by relying on AI algorithms to handle and filter out noisy sensor data in real time. This allows them to deliver dependable gesture recognition and health tracking, even when intense motion creates interference.