When wearable tech tracks your health 24/7, it raises big questions about privacy, fairness, and data security. Devices like aiSpine and aiRing are reshaping chronic disease management by spotting early health risks. But who owns the data? Can algorithms avoid bias? And how are your rights protected?

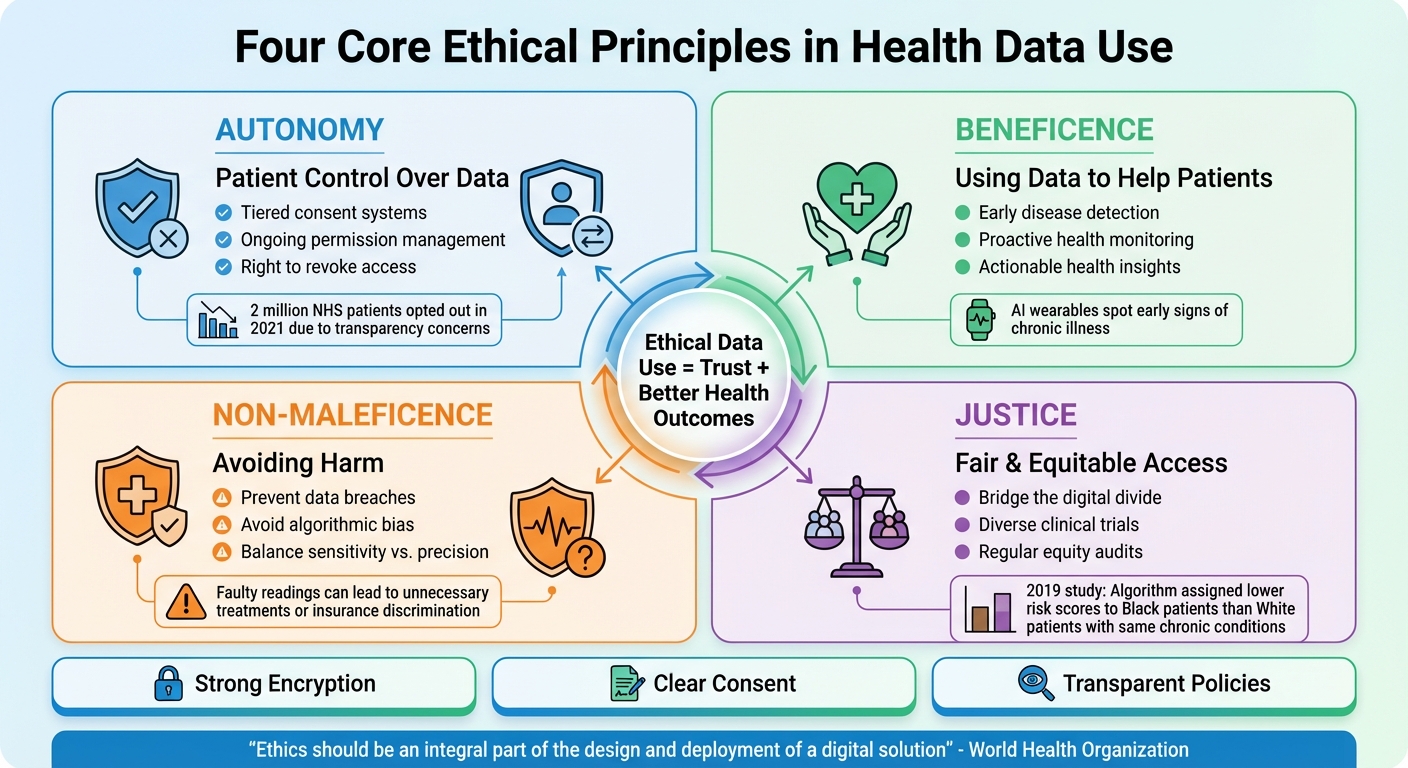

Here’s the bottom line: Ethical use of health data depends on four key principles: autonomy, beneficence, non-maleficence, and justice. This means giving you control over your data, ensuring it’s used to help (not harm), and designing systems that work for everyone – not just those who can afford them. Strong encryption, clear consent, and transparent policies are essential for trust. But the tech must also tackle bias and ensure equal access to avoid worsening health disparities.

The stakes are high. Mismanaged data can lead to discrimination, inflated insurance premiums, or loss of trust. Solutions like tiered consent, privacy-by-design, and regular algorithm audits can protect your data and promote fairness. The future of chronic disease monitoring depends on balancing innovation with ethical responsibility.

Four Core Ethical Principles for Health Data Use in Chronic Disease Monitoring



Core Ethical Principles in Data Use

When wearable devices track your heart rate, posture, or glucose levels around the clock, four core ethical principles should guide how that data is collected, stored, and used: autonomy, beneficence, non-maleficence, and justice. These aren’t just theoretical ideas – they directly impact whether chronic disease monitoring systems respect individual rights, improve health outcomes, and treat all users fairly.

Respecting Patient Autonomy

Patient autonomy means you have control over your health data. It’s not enough to give a blanket approval once – users need clear, specific, and ongoing options to manage their data. For instance, you might want to share your heart rate data with your doctor but keep your location data private. Just as importantly, you should be able to revoke that permission whenever you choose.

This isn’t a hypothetical concern. In 2021, England’s NHS introduced the General Practice Data for Planning and Research (GPDPR) initiative. The result? Two million patients opted out due to fears about transparency and potential commercialization of their data. This backlash highlights what happens when people feel their control over personal information is slipping away. To address this, platforms need to move away from "all-or-nothing" consent models and adopt tiered consent systems that let users decide which data they’re comfortable sharing and for what purpose.

"Users… face a seemingly impossible task to retain control over their data due to the scale, scope and complexity of systems that create, aggregate, and analyse personal health data." – MDPI Information

Ensuring Beneficence and Non-Maleficence

Beneficence means using data to help patients, while non-maleficence focuses on avoiding harm. For example, AI-powered wearables like the aiSpine posture monitor or aiRing vital signs tracker can spot early signs of chronic illnesses. This proactive approach gives patients a better chance to prevent or manage conditions early on.

But the potential for harm is real. Faulty readings, false alarms, or data breaches can lead to unnecessary medical treatments, anxiety, or even discriminatory practices by insurers. A major concern is algorithmic bias: if an AI system is trained on data from a narrow demographic, it can produce inaccurate or harmful results for underrepresented groups. Developers must also tune alert thresholds carefully, balancing sensitivity (catching real health warnings instead of missing them as false negatives) against precision (keeping false alarms, or false positives, low) so the system doesn’t overburden healthcare providers or cause unnecessary panic.
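To make the sensitivity/precision trade-off concrete, here is a minimal Python sketch that scans alert thresholds on validation data and picks the highest one that still meets a minimum sensitivity. The function names, the threshold-scan strategy, and the toy data are assumptions for illustration, not how aiSpine or aiRing are actually tuned.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    sensitivity: float  # share of real events that triggered an alert (recall)
    precision: float    # share of alerts that corresponded to real events


def evaluate_threshold(scores, labels, threshold):
    """Alert when score >= threshold; labels are 1 for a real event, 0 otherwise."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return Metrics(sensitivity, precision)


def pick_threshold(scores, labels, min_sensitivity):
    """Return the highest threshold (fewest alerts) that still meets the sensitivity floor."""
    for t in sorted(set(scores), reverse=True):
        metrics = evaluate_threshold(scores, labels, t)
        if metrics.sensitivity >= min_sensitivity:
            return t, metrics
    return None


# Hypothetical validation data: model risk scores and confirmed outcomes.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0, 0]
print(pick_threshold(scores, labels, min_sensitivity=0.75))
print(pick_threshold(scores, labels, min_sensitivity=1.0))
```

On this toy data, raising the sensitivity floor from 0.75 to 1.0 lowers the threshold from 0.7 to 0.4 and drops precision from 0.75 to about 0.67: no missed events, but more false alarms.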

"The H-IoT must be designed to be technologically robust and scientifically reliable, while also remaining ethically responsible, trustworthy, and respectful of user rights and interests." – MDPI

Promoting Justice in Healthcare

Justice in healthcare means ensuring fair and equitable access so that monitoring technologies benefit everyone, not just those who can afford high-end devices or live in areas with excellent connectivity. Without intentional design, wearables could deepen the "digital divide". People in underserved communities may face barriers like cost, lack of insurance coverage, or limited digital literacy, making it harder for them to access these tools.

Algorithmic bias also threatens fairness. In a 2019 study published in Science, researchers led by Ziad Obermeyer uncovered a troubling issue: a widely used commercial health algorithm assigned lower risk scores to Black patients than White patients with the same number of chronic conditions. Why? The algorithm used healthcare costs as a stand-in for health needs, excluding many Black patients from programs designed for high-risk individuals.

To ensure justice, developers need to take proactive steps: recruit diverse participants for clinical trials, regularly audit AI systems for equity, and use datasets that capture a wide range of ages, genders, and ethnicities. Without these measures, wearable technologies meant for chronic disease monitoring could end up reinforcing existing health inequalities instead of addressing them.

Building on these principles, the next section explores how clear consent protocols and transparent data policies can empower patients and protect their rights.

Patient Consent and Data Transparency

Wearing a device that tracks your posture or monitors your heart rate means your data becomes part of a system where it’s processed, stored, and sometimes shared with healthcare stakeholders. This highlights the pressing need for ethical data practices in managing chronic illnesses. Unfortunately, users often remain unaware of where their data goes or how it’s used. A 2024 review of 25 digital health consent forms found that the highest compliance rate was only 73.5%. Shockingly, over 90% of the forms failed to include critical information, such as protocols for data breaches or risks of re-identification.

Informed Consent in Wearable Devices

True informed consent goes beyond a one-size-fits-all checkbox; it means giving users ongoing, specific control over their data. Instead of requiring users to agree to broad data-sharing terms all at once, platforms should offer more detailed options. For instance, someone using the aiSpine posture monitor might choose to share spinal alignment data with their physical therapist while keeping their location data private. Others might agree to let their data support clinical care but not commercial AI development.

This concept of tiered consent is gaining momentum. In August 2025, researchers introduced the Standard Health Consent (SHC) platform, which organizes data into four categories – body health measures, lifestyle data, environmental data, and derived health insights. SHC also allows users to track how their data has been used over time.
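A tiered consent model like this can be represented as a default-deny set of grants keyed by data category, recipient, and purpose. The sketch is hypothetical: it borrows only SHC’s four category names, and the `TieredConsent` class, purposes, and recipient labels are illustrative, not SHC’s actual interface.

```python
from enum import Enum


class Category(Enum):
    # The four data categories the SHC platform is described as using.
    BODY_MEASURES = "body health measures"
    LIFESTYLE = "lifestyle data"
    ENVIRONMENT = "environmental data"
    DERIVED_INSIGHTS = "derived health insights"


class Purpose(Enum):
    # Hypothetical purposes a user can consent to separately.
    CLINICAL_CARE = "clinical care"
    RESEARCH = "research"
    COMMERCIAL_AI = "commercial AI development"


class TieredConsent:
    """Hypothetical per-user consent record: nothing is shared unless explicitly granted."""

    def __init__(self):
        self._grants = set()  # {(category, recipient, purpose)}

    def grant(self, category, recipient, purpose):
        self._grants.add((category, recipient, purpose))

    def revoke(self, category, recipient, purpose):
        self._grants.discard((category, recipient, purpose))

    def allows(self, category, recipient, purpose):
        return (category, recipient, purpose) in self._grants


consent = TieredConsent()
consent.grant(Category.BODY_MEASURES, "physical_therapist", Purpose.CLINICAL_CARE)
print(consent.allows(Category.BODY_MEASURES, "physical_therapist", Purpose.CLINICAL_CARE))  # True
print(consent.allows(Category.LIFESTYLE, "physical_therapist", Purpose.CLINICAL_CARE))      # False
print(consent.allows(Category.BODY_MEASURES, "ai_vendor", Purpose.COMMERCIAL_AI))          # False
```

Because every grant names a single category, recipient, and purpose, revoking one permission leaves the others untouched.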

"By replacing fragmented consent experiences with a single, structured interface, SHC empowers individuals to manage health data sharing across apps in a consistent and informed way." – Nature

Platforms like the AIH Health App, which integrates data from devices like aiSpine and aiRing, should focus on user-friendly designs. Instead of burying users in dense legal text, they could include clear visuals and plain-language explanations. For example, context-specific FAQs could define technical terms like “pseudonymization” or “data retention” in a way that’s easy to understand. This approach ensures users know exactly what they’re agreeing to.

This level of detail in consent naturally ties into the broader need for transparency in how data is handled.

Transparent Data Sharing Policies

Transparency in data sharing is just as crucial as informed consent. But it’s not a one-and-done effort – it requires an ongoing commitment to keep users updated about how their data is collected, stored, and shared. Many platforms fall short in this area. A survey of 222 mobile app families for wellness devices revealed that 64.4% failed to disclose whether they shared data with other apps or services, creating "data silos" that obscure the flow of information.

When platforms fail to communicate clearly, trust erodes. It’s critical that users understand what data is collected, who controls it, where it’s stored, and which third parties might access it.

For example, platforms should clearly identify the "data controller" – the entity legally responsible for managing the information – and specify whether data is stored on physical servers or in the cloud. Devices like aiRing, which use AI algorithms to monitor vital signs, should include labels explaining how the AI processes data and makes decisions. This builds confidence in the technology.

"Transparency can be seen as an auxiliary principle, but also as an essential precondition for enabling accountability, user trust, and regulatory compliance in AI systems." – Petar Radanliev, Department of Computer Science, University of Oxford

Dynamic consent models offer another solution. These systems re-engage users with full details whenever new data uses arise. For instance, if a platform initially collects data for clinical care but later wants to use it for public health research, users should be notified and given the choice to opt in or out.
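A dynamic consent gate can be sketched as a default-deny check that queues a re-consent prompt whenever a new purpose appears. `DynamicConsent` and the purpose strings are hypothetical; a real system would also deliver the full details of the new use to the user before asking.

```python
from dataclasses import dataclass, field


@dataclass
class DynamicConsent:
    """Hypothetical dynamic-consent gate: each new purpose needs its own opt-in."""
    consented_purposes: set = field(default_factory=set)
    pending_requests: list = field(default_factory=list)

    def request_use(self, purpose):
        """Return True if processing may proceed now; otherwise queue a prompt for the user."""
        if purpose in self.consented_purposes:
            return True
        if purpose not in self.pending_requests:
            self.pending_requests.append(purpose)  # notify the user with full details
        return False  # default: no processing until the user opts in

    def respond(self, purpose, opt_in):
        """Record the user's answer; opting out also removes any earlier consent."""
        if purpose in self.pending_requests:
            self.pending_requests.remove(purpose)
        if opt_in:
            self.consented_purposes.add(purpose)
        else:
            self.consented_purposes.discard(purpose)


consent = DynamicConsent(consented_purposes={"clinical_care"})
print(consent.request_use("clinical_care"))           # True
print(consent.request_use("public_health_research"))  # False: the user is prompted first
print(consent.pending_requests)                        # ['public_health_research']
consent.respond("public_health_research", opt_in=True)
print(consent.request_use("public_health_research"))  # True
```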

The National Institutes of Health’s "All of Us" Research Program serves as a powerful example. By February 2024, it had enrolled over 650,000 participants, including many from historically underrepresented groups. The program’s transparent dashboards clearly show how participant data is used, and its partnership with community organizations ensures multilingual materials are available, making transparency accessible to a broader audience.

For platforms managing chronic disease data, traceability and audit trails are non-negotiable. Providing users with a consent history log lets them review past actions, investigate possible data misuse, and make informed choices about future sharing. This approach not only fosters trust but also supports compliance with regulations like the GDPR and the EU AI Act.
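One way to make a consent history log tamper-evident is hash chaining: each entry stores the SHA-256 hash of the previous one, so altering or removing an earlier entry breaks verification. This is a minimal sketch with a hypothetical `ConsentHistory` class, not a description of any specific platform’s log. It cannot detect truncation of the newest entries unless the latest hash is also stored somewhere independent.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class ConsentHistory:
    """Append-only consent log in which each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, user_id, action, category, purpose, when=None):
        body = {
            "user_id": user_id,
            "action": action,  # e.g. "grant" or "revoke"
            "category": category,
            "purpose": purpose,
            "timestamp": (when or datetime.now(timezone.utc)).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Recompute the chain; an edited or removed earlier entry makes this fail."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def for_user(self, user_id):
        """The user-facing history: every consent action for one user, oldest first."""
        return [e for e in self.entries if e["user_id"] == user_id]


history = ConsentHistory()
history.record("user-17", "grant", "body health measures", "clinical care")
history.record("user-17", "revoke", "body health measures", "clinical care")
print(history.verify())  # True
history.entries[0]["purpose"] = "commercial AI development"  # simulated tampering
print(history.verify())  # False
```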

Protecting Privacy and Ensuring Data Security

Once users understand how their data is collected and shared, the next big question is: How is that data protected? In chronic disease monitoring, trust hinges not only on transparency but also on robust security measures. Without solid safeguards, even the most transparent systems can falter under threats like breaches or unauthorized access. For platforms managing sensitive health data – like spinal metrics from aiSpine or vital signs from aiRing – security isn’t just about compliance; it’s an ethical responsibility.

But here’s the catch: technology alone can’t solve this problem. The National Institute of Standards and Technology (NIST) emphasizes this in its Special Publication 1800-30: "Technology solutions alone may not be sufficient to maintain privacy and security controls on external environments. This practice guide notes the application of people, process, and technology as necessary to implement a holistic risk mitigation strategy". In simpler terms, protecting data requires teamwork – healthcare organizations, platform providers, and even patients must work together. This unified approach sets the stage for the technical safeguards we’ll dive into next.

Data Encryption and Security Protocols

Encryption forms the backbone of any strong security system, but no single mechanism covers everything: data must be protected at every stage of handling, on the device, in transit, and at rest. In February 2022, NIST’s National Cybersecurity Center of Excellence built a test ecosystem for Remote Patient Monitoring (RPM). Using the NIST Cybersecurity Framework and Zero Trust principles, it secured communication between patient homes and healthcare providers, tackling vulnerabilities in telehealth infrastructure.

AES-GCM is a widely used choice because it provides authenticated encryption, protecting both confidentiality and integrity: any tampering with the ciphertext is detected at decryption. In 2024, researchers from UC San Diego and the University of Wisconsin-Madison developed a secure smartwatch app using Android’s cryptography APIs. Their heart rate monitoring system encrypted data at the sensor level using Wear OS APIs, and the server then decrypted it with a key retrieved from the cloud, keeping the data protected throughout the process.

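For platforms like the AIH Health App, which pulls data from multiple devices, session key protocols such as ECDH (Elliptic Curve Diffie-Hellman) are critical. ECDH lets a wearable device and a cloud server each compute the same unique session key from exchanged public keys, without the key itself ever being transmitted. Because plain ECDH does not prove who is on the other end, those public keys must be authenticated (for example, with certificates) to block man-in-the-middle attacks. Paired with TLS, the protocol that secures HTTPS and the successor to the now-deprecated SSL, this protects data in transit from the moment it leaves the device until it reaches the cloud, where it should then be encrypted at rest.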
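The key exchange and authenticated encryption described here can be sketched with the widely used Python `cryptography` package: ECDH on the P-256 curve produces a shared secret, HKDF turns it into a 256-bit key, and AES-GCM encrypts a reading while binding it to a device identifier as associated data. This assumes `cryptography` is installed. The `info` label and device ID are hypothetical, and the sketch omits the certificate checks a real deployment needs to authenticate each side’s public key.

```python
import os

from cryptography.exceptions import InvalidTag
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import ec
from cryptography.hazmat.primitives.ciphers.aead import AESGCM
from cryptography.hazmat.primitives.kdf.hkdf import HKDF


def derive_session_key(own_private_key, peer_public_key):
    """ECDH shared secret -> HKDF-SHA256 -> 256-bit AES key. Only public keys cross the network."""
    shared_secret = own_private_key.exchange(ec.ECDH(), peer_public_key)
    return HKDF(
        algorithm=hashes.SHA256(),
        length=32,
        salt=None,
        info=b"wearable-session-v1",  # hypothetical context label
    ).derive(shared_secret)


def seal(key, plaintext, associated_data):
    """Encrypt and authenticate; the random 96-bit nonce must never repeat for the same key."""
    nonce = os.urandom(12)
    return nonce + AESGCM(key).encrypt(nonce, plaintext, associated_data)


def open_sealed(key, blob, associated_data):
    """Decrypt; raises InvalidTag if the ciphertext or associated data was altered."""
    nonce, ciphertext = blob[:12], blob[12:]
    return AESGCM(key).decrypt(nonce, ciphertext, associated_data)


# Each side generates its own key pair; only the public halves are exchanged.
device_key = ec.generate_private_key(ec.SECP256R1())
server_key = ec.generate_private_key(ec.SECP256R1())
device_session = derive_session_key(device_key, server_key.public_key())
server_session = derive_session_key(server_key, device_key.public_key())
assert device_session == server_session

reading = b'{"heart_rate_bpm": 72}'
aad = b"device-id:ring-001"  # hypothetical identifier, authenticated but not encrypted
blob = seal(device_session, reading, aad)
print(open_sealed(server_session, blob, aad))
```

Flipping a single bit of `blob`, or presenting a different device ID as associated data, makes `open_sealed` raise `InvalidTag` instead of returning altered data.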

Zero Trust Architecture adds another layer of defense. Unlike traditional security models, Zero Trust doesn’t automatically trust anything inside the network. Instead, it continuously verifies every user and device, encrypting all access requests. This approach not only strengthens system security but also aligns with ethical principles like “do no harm.” For chronic disease monitoring systems, where data flows between homes, healthcare providers, and analytics platforms, Zero Trust is especially important.
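Zero Trust’s "verify every request" rule can be sketched as a check that runs on each call regardless of where the request comes from: the token’s signature, its expiry, the device, and the exact scope must all pass. The token format, `SIGNING_KEY`, and helper names are hypothetical stand-ins; production systems typically rely on standard token formats and an identity provider rather than hand-rolled HMAC strings.

```python
import hashlib
import hmac
import time

SIGNING_KEY = b"demo-only-key"  # hypothetical; production keys belong in a key-management service


def _sign(payload):
    return hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user, device, scope, ttl_s, now=None):
    """Short-lived token bound to one user, one device, and one scope."""
    now = time.time() if now is None else now
    payload = f"{user}|{device}|{scope}|{int(now + ttl_s)}"
    return f"{payload}|{_sign(payload)}"


def authorize(token, required_scope, trusted_devices, now=None):
    """Verify every request on its own merits; network location grants nothing."""
    now = time.time() if now is None else now
    parts = token.split("|")
    if len(parts) != 5:
        return False
    _user, device, scope, expiry, signature = parts
    if not hmac.compare_digest(signature, _sign("|".join(parts[:4]))):
        return False  # forged or altered token
    if int(expiry) < now:
        return False  # expired: the client must re-authenticate
    if device not in trusted_devices:
        return False  # unregistered or non-compliant device
    return scope == required_scope  # least privilege: the token covers one scope only


token = issue_token("dr_lee", "clinic-tablet-7", "read:vitals", ttl_s=300, now=1_000)
print(authorize(token, "read:vitals", {"clinic-tablet-7"}, now=1_100))   # True
print(authorize(token, "read:vitals", {"clinic-tablet-7"}, now=1_400))   # False (expired)
print(authorize(token, "write:vitals", {"clinic-tablet-7"}, now=1_100))  # False (wrong scope)
```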

| Security Layer | Protocol/Standard | Function |
|---|---|---|
| Data in Transit | AES-GCM | Authenticated encryption for confidentiality and integrity |
| Key Exchange | ECDH | Establishes a unique session key between device and cloud |
| Communication | HTTPS / TLS | Creates a secure channel for data transfer |
| Identity | RSA / Certificates | Authenticates the server and client via a certificate chain |
| Architecture | Zero Trust | Continuous verification of all users and devices |

These layers work together to create a robust defense system, but encryption alone isn’t enough. Data minimization is another key principle. Systems should only collect the data they truly need. For instance, if aiSpine is used for posture tracking, there’s no reason to gather location data or browsing history. By limiting the data collected, the potential fallout from a breach is significantly reduced. This approach not only protects patient data but also upholds ethical responsibilities to prevent harm.
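Data minimization can be enforced at ingestion with a per-purpose allowlist that drops every field the declared purpose doesn’t need. The purposes and field names below are hypothetical, not aiSpine’s actual data schema.

```python
# Hypothetical per-purpose allowlists; field names are illustrative only.
ALLOWED_FIELDS = {
    "posture_tracking": {"timestamp", "spine_angle_deg", "sitting_minutes"},
    "vital_signs": {"timestamp", "heart_rate_bpm", "spo2_pct"},
}


def minimize(record, purpose):
    """Keep only the fields the declared purpose needs; refuse undeclared purposes."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        raise ValueError(f"no declared purpose: {purpose!r}")
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "timestamp": "2024-05-01T09:30:00Z",
    "spine_angle_deg": 17.5,
    "sitting_minutes": 42,
    "gps_location": (40.71, -74.00),
    "browsing_history": ["example.com"],
}
print(minimize(raw, "posture_tracking"))
# {'timestamp': '2024-05-01T09:30:00Z', 'spine_angle_deg': 17.5, 'sitting_minutes': 42}
```

Rejecting undeclared purposes outright, rather than passing data through, keeps new uses from quietly expanding what gets collected.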

Mitigating Risks of Data Breaches

Even with strong encryption, vulnerabilities can still surface. Remote patient monitoring systems are highly interconnected, involving healthcare providers, telehealth platforms, and patients’ home networks. Each participant in this ecosystem plays a role in safeguarding data.

Behavioral analytics can help spot threats early. By tracking unusual activities – like odd login times, unexpected data access patterns, or attempts to download large amounts of data – systems can flag potential breaches. For example, if a clinician who typically logs in during office hours suddenly accesses records at 3:00 AM and tries to download hundreds of files, the system can immediately flag this behavior for review.
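The 3:00 AM bulk-download scenario can be expressed as a pair of simple rules. This is a rule-based sketch with hypothetical thresholds (usual hours of 8:00 to 18:00, 100 records); behavioral analytics products usually learn per-user baselines rather than relying on fixed cut-offs.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AccessEvent:
    user: str
    time: datetime
    records_downloaded: int


def flag_event(event, usual_hours=(8, 18), bulk_threshold=100):
    """Return the reasons an access event looks unusual; an empty list means no flag."""
    reasons = []
    start, end = usual_hours
    if not (start <= event.time.hour < end):
        reasons.append("outside usual hours")
    if event.records_downloaded >= bulk_threshold:
        reasons.append("bulk download")
    return reasons


night = AccessEvent("clinician_42", datetime(2024, 3, 5, 3, 0), records_downloaded=300)
day = AccessEvent("clinician_42", datetime(2024, 3, 5, 10, 15), records_downloaded=4)
print(flag_event(night))  # ['outside usual hours', 'bulk download']
print(flag_event(day))    # []
```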

Access controls and audit logs are another must-have. Strict access controls limit who can view sensitive health data, while audit logs track every instance of data access or modification. For platforms like aiRing, which continuously monitors vital signs, these logs should detail not just who accessed the data but also what actions they took and when. This ensures accountability and speeds up investigations if a breach occurs.
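Access control and audit logging fit together naturally: every access attempt, allowed or denied, produces a log entry recording who acted, what they tried to do, on whose data, and when. The roles, actions, and in-memory `audit_log` list are hypothetical simplifications; a production log would be append-only and stored separately from the application.

```python
from datetime import datetime, timezone

# Hypothetical role -> permitted actions mapping.
ROLE_PERMISSIONS = {
    "clinician": {"read_vitals", "read_summary"},
    "care_coordinator": {"read_summary"},
    "it_admin": {"manage_accounts"},
}

audit_log = []  # in-memory stand-in for an append-only audit store


def access(user, role, action, patient_id):
    """Check the role's permissions and log the attempt whether or not it is allowed."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "user": user,
        "role": role,
        "action": action,
        "patient_id": patient_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "allowed": allowed,
    })
    return allowed


print(access("dr_lee", "clinician", "read_vitals", "patient-001"))  # True
print(access("ops_sam", "it_admin", "read_vitals", "patient-001"))  # False, but still logged
```

Logging denied attempts matters as much as logging successful ones: repeated denials are often the first sign of a compromised account.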

Multi-factor authentication (MFA) is another layer of protection. By requiring multiple forms of verification – like a password plus a fingerprint scan or a one-time code – MFA significantly reduces the risk of unauthorized access, even if a password is compromised.
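One-time codes from authenticator apps are commonly generated with the TOTP algorithm (RFC 6238), which applies HMAC to the current 30-second time step. The sketch implements TOTP with Python’s standard library and pairs it with a password check; `verify_second_factor` and its one-step clock-skew window are illustrative choices, not a specific product’s behavior.

```python
import hashlib
import hmac
import struct
import time


def totp(secret, at=None, step=30, digits=6):
    """Time-based one-time password (RFC 6238) with HMAC-SHA1."""
    counter = int((time.time() if at is None else at) // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation from RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_second_factor(password_ok, secret, submitted_code, at=None, step=30):
    """Both factors must pass; the current and previous time steps are accepted for clock skew."""
    if not password_ok:
        return False
    now = time.time() if at is None else at
    candidates = (totp(secret, now, step), totp(secret, now - step, step))
    return any(hmac.compare_digest(submitted_code, c) for c in candidates)


secret = b"12345678901234567890"  # RFC 6238 test secret; real secrets are random per user
print(totp(secret, at=59, digits=8))  # 94287082 (RFC 6238 test vector)
```

Because a stolen password alone fails `verify_second_factor`, an attacker also needs the per-user secret held on the user’s device.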

Lastly, privacy by design ensures that systems are built with privacy as a default setting. This means features like automatic data anonymization, aggregation to prevent re-identification, and time-stamping to ensure accurate health monitoring logs are baked into the platform from the start. Users shouldn’t have to opt in to these protections – they should be standard.
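Two of these privacy-by-design defaults, pseudonymization and aggregation that resists re-identification, can be sketched with the standard library: a keyed HMAC replaces patient IDs with stable pseudonyms, and group counts below a minimum size `k` are suppressed before reporting. The key, field names, and `k=5` are hypothetical choices.

```python
import hashlib
import hmac
from collections import Counter

PSEUDONYM_KEY = b"demo-only-key"  # hypothetical; store apart from the data it protects


def pseudonymize(patient_id):
    """Keyed hash: the same patient always maps to the same pseudonym,
    but pseudonyms cannot be recomputed from known IDs without the key."""
    return hmac.new(PSEUDONYM_KEY, patient_id.encode(), hashlib.sha256).hexdigest()[:16]


def aggregate(records, group_key, k=5):
    """Count records per group and suppress groups smaller than k."""
    counts = Counter(record[group_key] for record in records)
    return {group: n for group, n in counts.items() if n >= k}


readings = [{"patient": pseudonymize(f"p{i}"), "zip3": "100"} for i in range(6)]
readings += [{"patient": pseudonymize(f"p{i}"), "zip3": "101"} for i in range(6, 8)]
print(aggregate(readings, "zip3"))  # {'100': 6}; the 2-person group is suppressed
```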

Regulations like HIPAA, enforced by the U.S. Department of Health and Human Services’ Office for Civil Rights (OCR), and consumer protection laws enforced by the Federal Trade Commission (FTC) set clear standards for health data security. Some data, like substance use disorder treatment records, face even stricter rules under 42 CFR Part 2. Wearable and app vendors that fall outside HIPAA may also be covered by the FTC’s Health Breach Notification Rule, which requires notifying affected individuals without unreasonable delay and no later than 60 calendar days after a breach is discovered.

Addressing Bias and Promoting Health Equity

Even the most secure systems can fail when fairness is overlooked. AI algorithms in chronic disease monitoring risk widening healthcare disparities if they’re built on biased data or fail to account for diverse populations. As of May 13, 2024, the FDA had authorized 882 AI-enabled medical devices. However, a review of 48 healthcare AI studies found that 50% carried a high risk of bias, often due to missing sociodemographic data or unbalanced datasets. For tools like aiSpine and aiRing, which track spine health and vital signs, addressing bias isn’t optional – it’s a responsibility.

The dangers of ignoring bias are clear. The 2019 study led by Ziad Obermeyer examined a widely used risk-prediction algorithm applied to 43,539 White patients and 6,079 Black patients. Because the algorithm used healthcare costs as a stand-in for illness severity, Black patients had 26.3% more chronic illnesses than White patients with the same risk score. The researchers estimated that recalibrating the algorithm to use direct health indicators, such as chronic condition counts instead of costs, would raise the share of Black patients identified for extra care from 17.7% to 46.5%. This example highlights how proxy variables can distort true health status and perpetuate systemic inequalities.
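The proxy-variable problem can be reproduced on a tiny synthetic cohort. In this sketch both groups have the same spread of chronic conditions, but group B is assumed to incur 30% lower costs per condition (for example, because of barriers to care). Ranking by cost then flags no group B patients, while ranking by condition count flags both groups equally. The data is invented for illustration and does not reproduce the study’s numbers.

```python
# Synthetic cohort: identical illness burden in both groups, but group B is assumed
# to incur 30% lower costs per condition (e.g., because of barriers to care).
patients = []
for i in range(10):
    conditions = i % 5  # 0-4 chronic conditions, same spread in each group
    patients.append({"group": "A", "conditions": conditions, "cost": conditions * 1000})
    patients.append({"group": "B", "conditions": conditions, "cost": conditions * 700})


def share_flagged(patients, score_key, k, group="B"):
    """Rank by score_key, flag the top k for extra care, and return the share from `group`."""
    flagged = sorted(patients, key=lambda p: p[score_key], reverse=True)[:k]
    return sum(p["group"] == group for p in flagged) / k


print(share_flagged(patients, "cost", k=4))        # 0.0: cost as a proxy misses group B
print(share_flagged(patients, "conditions", k=4))  # 0.5: a direct measure flags both groups equally
```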

Identifying and Reducing Bias in AI Algorithms

Bias doesn’t just happen – it’s often built into algorithms during development. The Total Product Lifecycle (TPLC) approach tackles this issue by addressing bias from the initial design phase to real-world implementation. At the design stage, it’s crucial to involve diverse expert teams to define problems accurately. During development, training data must include a wide range of demographics, such as age, gender, race, ethnicity, and skin tone. Validation should include subgroup statistical testing, also known as "accuracy disaggregation", which measures diagnostic performance across different demographic groups instead of relying on population-wide averages.
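Accuracy disaggregation can be as simple as computing the metric per subgroup instead of once overall. In this sketch the subgroup labels (Fitzpatrick skin-type bands) and the rows are hypothetical; the point is that an overall sensitivity of 0.75 can hide a 0.5 gap between groups.

```python
from collections import defaultdict


def sensitivity_by_group(rows):
    """rows: (group, true_label, predicted_label). Sensitivity = detected positives / all positives."""
    detected = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in rows:
        if y_true == 1:
            positives[group] += 1
            detected[group] += int(y_pred == 1)
    return {group: detected[group] / positives[group] for group in positives}


# Hypothetical validation rows for two skin-type bands.
rows = (
    [("I-II", 1, 1)] * 4
    + [("V-VI", 1, 1)] * 2
    + [("V-VI", 1, 0)] * 2
    + [("I-II", 0, 0), ("V-VI", 0, 0)]
)
per_group = sensitivity_by_group(rows)
overall = (sum(1 for _g, y, p in rows if y == 1 and p == 1)
           / sum(1 for _g, y, _p in rows if y == 1))
print(overall)    # 0.75
print(per_group)  # {'I-II': 1.0, 'V-VI': 0.5}
print(max(per_group.values()) - min(per_group.values()))  # 0.5
```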

For example, wearable devices like aiRing, which monitor vital signs continuously, need to ensure that metrics like heart rate and oxygen saturation are accurate for all skin tones and body types. In 2024, the French government’s Health Data Hub gave the cardiac monitoring platform Implicity access to anonymized data from 3.7 million individuals. By leveraging a nationwide, single-payer database rather than data from urban academic centers alone, the project aimed to reduce selection bias and improve heart failure predictions across diverse groups.

"Advancing health equity should be a fundamental objective of any algorithm used in health care." – Marshall H. Chin, MD, MPH, JAMA Network Open

Regular equity audits are also critical because models age. AI systems can experience "data drift" or "concept shift", in which training data that was once representative becomes outdated as patient populations, clinical practices, and societal norms change, eroding accuracy and introducing bias. For platforms like the AIH Health App, which integrates data from multiple devices, this means monitoring performance across demographic subgroups and regularly updating algorithms to address emerging disparities.
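Input drift can be tracked with the Population Stability Index (PSI), which compares how a feature’s values fall into fixed bins in a baseline period versus a recent one: PSI is the sum over bins of (actual share − expected share) × ln(actual share / expected share). The cut points and sample values below are hypothetical, and running the same check separately for each demographic subgroup helps catch drift that affects only part of the population. A commonly cited rule of thumb treats PSI above about 0.25 as a significant shift, though any threshold should be validated for the use at hand.

```python
import bisect
import math


def bin_shares(values, cut_points, eps=1e-6):
    """Share of values per bin; bins are (-inf, c0), [c0, c1), ..., [c_last, inf)."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect.bisect_right(cut_points, v)] += 1
    # eps avoids log(0) and division by zero for empty bins
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, recent, cut_points):
    """Population Stability Index between a baseline sample and a recent sample."""
    expected = bin_shares(baseline, cut_points)
    actual = bin_shares(recent, cut_points)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical resting heart-rate samples (bpm) and cut points.
cuts = [60, 80, 100]
baseline = [58, 62, 65, 70, 72, 75, 78, 85]
recent = [70, 75, 82, 85, 88, 90, 95, 102]
print(round(psi(baseline, recent, cuts), 2))
```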

Improving Accessibility in Chronic Disease Monitoring

Improving AI models is just one piece of the puzzle. Ensuring these advancements reach everyone requires tackling accessibility challenges head-on. Fairness isn’t just about the algorithms – it’s also about who has access to the technology. The digital divide often leaves low-income users and those with limited digital literacy behind. To bridge this gap, developers need to create tools that work for people with varying technical skills and financial means. For instance, using advanced algorithms that perform well on more affordable devices can lower hardware costs.

Community engagement is key to identifying specific health needs and addressing cultural considerations. For a spine health tool like aiSpine, this might involve understanding how posture tracking differs for manual laborers versus office workers, or designing interfaces that are user-friendly for older adults with limited experience using smartphones.

Transparency also plays an important role. Tools like "model cards" or data labels can disclose what types of data were used for training and highlight any limitations in performance for specific groups. For chronic disease monitoring platforms, this means being clear about whether the technology has been validated across a range of clinical environments, including under-resourced or rural clinics.
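A model card is ultimately a structured document, so it can be generated from information the team already tracks. The minimal schema below is hypothetical and far smaller than published model-card templates; every value is a placeholder, not a result for any real device.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class SubgroupResult:
    group: str
    n_validation: Optional[int]  # None until validation has actually been run
    sensitivity: Optional[float]


@dataclass
class ModelCard:
    """Hypothetical minimal model card schema."""
    model_name: str
    intended_use: str
    training_data: str
    validation_settings: list
    subgroup_results: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)

    def to_json(self):
        # asdict() also converts the nested SubgroupResult entries
        return json.dumps(asdict(self), indent=2)


card = ModelCard(
    model_name="example-vitals-model",  # placeholder values throughout
    intended_use="Flag irregular resting heart rate for clinician review",
    training_data="Describe sources, dates, and demographic make-up here",
    validation_settings=["urban academic clinic", "rural clinic"],
    subgroup_results=[SubgroupResult("age 65+", n_validation=None, sensitivity=None)],
    known_limitations=["List populations or settings where performance is unverified"],
)
print(card.to_json())
```

Leaving unvalidated subgroups as explicit nulls, rather than omitting them, makes gaps in validation visible to readers of the card.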

| Area of Concern | Key Issue | Recommendation |
|---|---|---|
| Data Quality | Variability of sensors and lack of context | Establish local standards of data quality |
| Balanced Estimations | Overestimation and overprediction | Ensure interoperability of wearable data |
| Health Equity | Unequal access and digital divides | Ensure access to data and interpretation |
| Fairness | Exclusion of populations and unfair datasets | Improve representativity of wearable data |

Lastly, interoperability standards are crucial for integrating data across platforms. Shared formats make it possible to combine and cross-check data from different sources, which reduces the risk of overestimation in health monitoring and supports more accurate assessments across diverse populations. For example, aiRing data can be shared with electronic health records, giving clinicians a more comprehensive view of each patient’s health.
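One widely used interoperability standard for sending wearable readings to electronic health records is HL7 FHIR. The sketch builds a FHIR R4 `Observation` for a heart-rate reading using the LOINC code 8867-4 and the UCUM unit `/min`. Whether aiRing or the AIH Health App actually exports FHIR is an assumption, and the patient reference is a placeholder.

```python
import json


def heart_rate_observation(patient_ref, bpm, effective_iso):
    """Build a FHIR R4 Observation for heart rate (LOINC 8867-4, UCUM '/min')."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "vital-signs",
                "display": "Vital Signs",
            }]
        }],
        "code": {
            "coding": [{"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}]
        },
        "subject": {"reference": patient_ref},
        "effectiveDateTime": effective_iso,
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }


obs = heart_rate_observation("Patient/example", 72, "2024-05-01T09:30:00Z")
print(json.dumps(obs, indent=2))
```

Using standard codes (LOINC for what was measured, UCUM for units) is what lets a receiving EHR interpret the reading without device-specific mapping.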

Regulatory Compliance and Ethical Oversight

In the United States, strict regulatory standards and ethical oversight go hand in hand to ensure continuous health data monitoring is both safe and respectful of patient rights. For wearable devices like aiSpine and aiRing, which gather ongoing health data, navigating these requirements is key to earning trust and providing effective care.

US Regulatory Standards

HIPAA: The Backbone of Patient Data Protection

HIPAA (Health Insurance Portability and Accountability Act) is the foundation for safeguarding patient data in the U.S. Its Privacy Rule, published as a final rule on December 28, 2000, after more than 52,000 public comments, sets national standards for protecting "protected health information" (PHI). The rule limits how covered entities may use or disclose PHI without a patient’s authorization, while permitting uses for purposes such as treatment, payment (including billing), and healthcare operations, along with other specified exceptions.

For platforms like the AIH Health App, which consolidates data from multiple wearables, HIPAA applies when they handle PHI for a covered entity, for example as a business associate. In that case, compliance means implementing safeguards to protect data and ensuring that shared information is limited to the "minimum necessary".

"A major goal of the Privacy Rule is to assure that individuals’ health information is properly protected while allowing the flow of health information needed to provide and promote high quality health care." – HHS.gov

FDA Oversight and Medical Device Regulation

The FDA also plays a critical role in regulating software that analyzes medical data. Depending on their intended use, tools like aiRing, which processes ECG signals, or aiSpine, which monitors posture patterns, can fall under the definition of a medical device in Section 201(h) of the FD&C Act. The 21st Century Cures Act, however, excluded certain Clinical Decision Support (CDS) software from that definition. To qualify, the software must meet all four statutory criteria: among other things, it must not analyze medical images or physiological signals, and it must let healthcare professionals independently review the basis for its recommendations rather than rely on them as the primary basis for a decision.

The FDA’s Digital Health Policy Navigator is a useful resource for determining whether a software function qualifies as a regulated medical device.

| Feature | Non-Device CDS | Device-Regulated CDS |
|---|---|---|
| Data Input | Medical references (textbooks, guidelines, labs) | Medical images, signals (like ECG), or patterns (e.g., genomic data) |
| HCP Role | Allows independent review of recommendations | Provides definitive diagnosis or analyzes complex signals |
| FDA Oversight | Excluded under Section 520(o) | Requires FDA regulation and classification |

The FDA has also issued guidance on Predetermined Change Control Plans (PCCPs) for AI/ML-enabled device software, which let manufacturers describe planned algorithm updates in advance so the software can keep improving while its safety and effectiveness are maintained. Additionally, in October 2021, the FDA, Health Canada, and the UK’s MHRA jointly released 10 guiding principles for Good Machine Learning Practice (GMLP) to support the safe development of AI/ML medical devices.

Regulations like these are complemented by ethical oversight, which ensures that patient protections are upheld throughout the development and use of health technologies.

Implementing Ethical Oversight Mechanisms

While regulations create the framework, ethical oversight ensures these rules are applied effectively. Tools like the ReCODE Health Digital Health Checklist help Institutional Review Boards (IRBs) and researchers address critical issues such as privacy, access, risk-benefit analysis, and data management. For platforms focused on chronic disease monitoring, this means embedding ethical considerations into every stage – planning, designing, testing, and even post-launch.

"We are living in a real-time experiment on balancing privacy and public health." – Camille Nebeker, Director of the Research Center for Optimal Digital Ethics (ReCODE), UC San Diego

Managing Risks in Predictive Models

For platforms using predictive models, Intervention Risk Management (IRM) is essential. This involves conducting thorough risk analyses, implementing mitigation strategies, and establishing governance systems to oversee decision-support interventions. The ONC HTI-1 rule, effective February 8, 2024, now requires developers to provide "source attributes" for predictive tools. This includes documenting how the intervention was developed, its performance metrics, and plans for ongoing updates.

Continuous monitoring is another key element. Standardized assessments of data quality help track outcomes, including impacts on health equity, and inform policy adjustments as new evidence emerges. Building trust is equally important. Strategies that return meaningful insights to participants – rather than just extracting their data – help establish a sense of partnership.

Involving diverse stakeholders – such as ethicists, legal experts, developers, and end-users – early in the process can help identify potential impacts on different populations. For companies like AIH LLC, which supports FDA certification applications, this collaborative approach ensures ethical considerations are woven into every phase of development and deployment.

Best Practices for Ethical Data Integration

Building Patient-Centric Platforms

Ethical data integration begins with user-inclusive co-design processes. A great example of this approach is the three-phase study led by Federation University in Australia (2023–2026). Researchers Colette Joy Browning and Amanda Ellen Young spearheaded the development of a digital health platform aimed at managing chronic diseases in rural areas. The project involved gathering 84 survey responses in Phase 1 and conducting 9 workshops in Phase 2. They utilized the Behavioral Intervention Technology (BIT) model and the NASSS framework to navigate challenges like workforce shortages and gaps in digital literacy.

Inclusive co-design ensures that platforms meet the diverse needs of users while keeping health tracking entirely voluntary. It’s crucial that systems respect user decisions without nudging or overriding them.

Another key principle is to collect only the data necessary for the platform’s stated purpose. There should be no commercial sale or secondary use of data without explicit user consent. The WHO’s SMART Trust framework emphasizes this, stating, “a digital solution should only be used for its intended purpose, as inappropriate uses may result in legitimate ones being undermined”. For instance, platforms like the AIH Health App, which integrates data from devices like aiSpine and aiRing, embed these principles into their design to foster trust and comply with regulations.

After building patient-centric platforms, ongoing evaluation is essential to address new challenges and ensure these systems remain effective.

Continuous Monitoring and Improvement

Once a patient-centric platform is in place, continuous monitoring is critical to maintaining ethical data practices. Post-implementation tracking helps measure outcomes and assess impacts on health equity. The WHO suggests creating independent oversight bodies to monitor data controllers and revoke processing privileges if ethical standards are breached.

To prevent and address disparities, regular bias audits and transparent documentation are a must. Tools like "datasheets" and model cards that disclose fairness metrics can help identify issues early. Security monitoring needs the same ongoing attention: hacking incidents in healthcare have surged by 239% since 2018, and 725 breaches exposed more than 133 million patient records in 2023 alone.

Platforms should also establish feedback loops that allow all stakeholders, especially marginalized groups, to participate in shaping policies. Providing users with meaningful access to their data – in formats they can actually use – goes beyond simply offering raw data dumps. For critical applications like rehabilitation or life-saving monitoring, it’s essential to create a "chain of liability." This involves assembling a team of clinicians, engineers, and legal experts to clearly define responsibilities in case of device failure.

Conclusion

Ethical data use goes beyond simply meeting regulations – it’s about fostering trust and advancing chronic disease monitoring. When platforms focus on transparency, respect for patient autonomy, and limiting data collection to what’s truly necessary, they create environments where people feel safe sharing sensitive health information. As the World Health Organization puts it, "Ethics should be an integral part of the design and deployment of a digital solution". Without this foundation, even the most advanced systems risk alienating patients and worsening health disparities.

This focus on ethics is increasingly critical as the digital health market continues its rapid growth. The wearable technology sector, for instance, has seen explosive development, showcasing the vast opportunities in digital health. But with growth comes responsibility. Ethical principles must guide every step of the data lifecycle – from collection and encryption to ensuring algorithms are transparent and free from bias. One major hurdle? Many digital health consent forms fail to provide clear, comprehensive information, complicating efforts to uphold ethical data practices.

Take AIH LLC as an example of how ethical design can lead the way. By integrating tools like aiSpine and aiRing with the AIH Health App, the company demonstrates a commitment to patient-centered care. Their approach respects individual autonomy while delivering actionable insights, showing how digital health solutions can complement – not replace – human healthcare.

As Camille Nebeker wisely urges, the digital health community must "move purposefully and fix things", rather than "move fast and break things". Upholding strong ethical standards ensures that these technologies empower patients and deliver fair, meaningful health outcomes. Ethical data use transforms chronic disease monitoring from a mere tracking system into a collaborative effort that benefits everyone involved.

FAQs

Who owns my wearable health data?

Ownership of wearable health data in the U.S. is largely dictated by the terms of service and privacy policies set by the device manufacturer. While users typically have the ability to access, review, or delete their data, companies often retain specific rights regarding how that data is used. This raises ethical questions about user control, especially when the data is shared with third parties. It’s also worth noting that regulations like HIPAA primarily govern healthcare providers, leaving standalone wearable devices outside its scope.

How can I share only some data and revoke consent later?

You can use a user-driven consent platform tailored for digital health applications to manage and share specific pieces of your health data. These platforms put you in control, letting you decide what information to share and offering the flexibility to update or withdraw your consent whenever you choose. This approach ensures your data is handled securely while meeting ethical guidelines for health information sharing.

How do these systems prevent AI bias and health inequity?

Developers reduce bias and health inequity by proactively identifying and mitigating bias during both the creation and the use of AI systems. Strategies include evaluating performance across varied demographic groups, adopting inclusive data practices, and integrating bias-reduction techniques throughout the AI’s lifecycle. Ethical governance frameworks, transparency, and active community involvement also play a crucial role in promoting fairness. Together, these efforts help ensure equitable access and outcomes in AI-driven chronic disease monitoring.